Chapter 8: Closed-Loop Agentic Systems

Summary

While previous chapters addressed individual components of the agentic loop — planning, control, memory — this chapter covers research that integrates them into a closed-loop system. REFLECT teaches robots to explain failures, AutoRT safely manages robot fleets at scale, BUMBLE demonstrates an integrated agentic loop at building scale, and PragmaBot implements a complete agentic robot that learns from experience. This trajectory shows what Agentic Coding's code-execute-debug loop looks like in the physical world.

8.1 Introduction: Physically Implementing the Agentic Loop

Agentic Coding's loop is already complete. Claude Code automatically generates code, executes it, analyzes errors, and fixes them. This loop is production-ready thanks to the digital world's three properties analyzed in Chapter 1: deterministic execution, precise feedback, and full reversibility.

Implementing the same loop in the physical world makes every step fundamentally harder. Observations are incomplete due to sensor noise, execution is stochastic, failure causes are ambiguous, and reversal is impossible. The four papers in this chapter progressively implement the agentic loop despite these challenges.

8.2 REFLECT: Explaining Failures

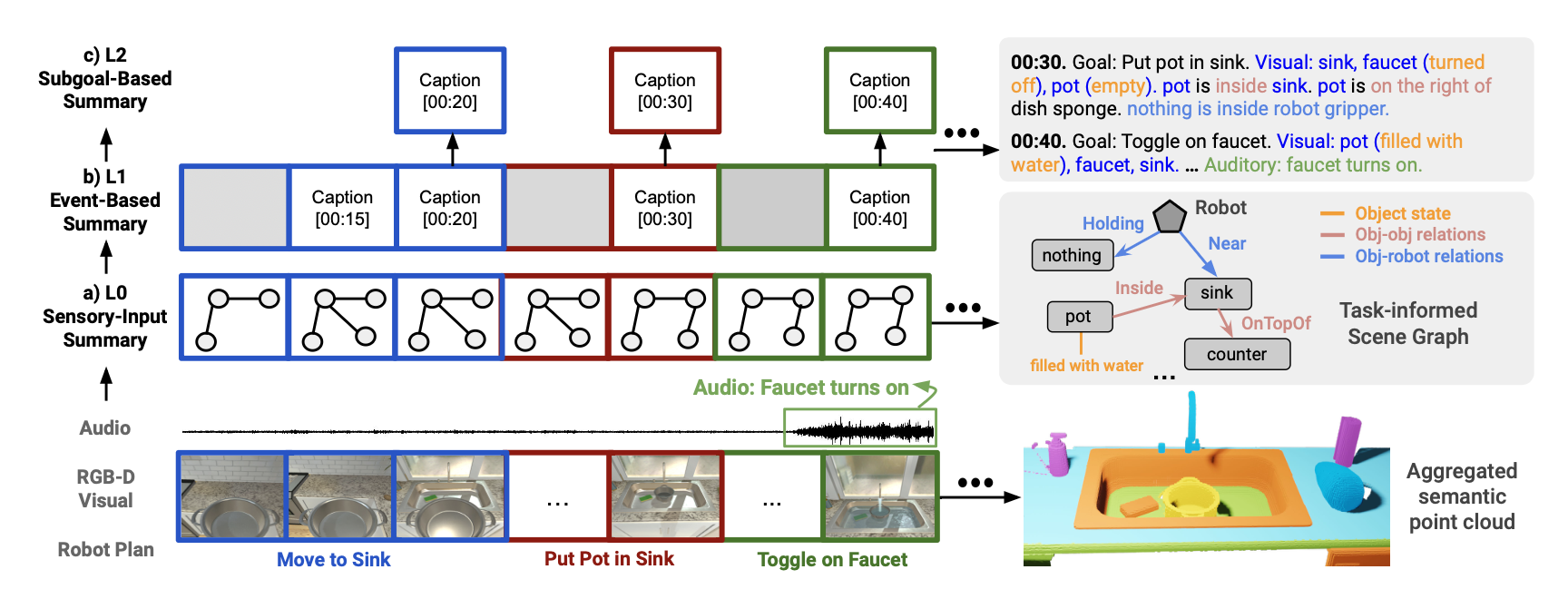

REFLECT [Liu et al., 2023] brings reflection, a core component of the agentic loop, to robots. It builds hierarchical summaries of a robot's past experiences from multisensory observations and queries an LLM to explain the causes of failure.

The method has three stages: hierarchical experience summarization from multisensory data; Progressive Failure Explanation, in which the LLM works through the summaries step by step to infer the failure cause; and correction plan generation based on that explanation. It is evaluated on the RoboFail dataset.

REFLECT directly corresponds to Agentic Coding's error analysis, debugging, and fix loop. The key difference: code errors have relatively clear causes via stack traces and logs, while robot failures require inferring causes from visual, force, and position data across multiple sensory modalities. Determining "why it failed" is itself far harder for robots.
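The summarize-then-explain flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not REFLECT's implementation: the `Event` record, the two-level summary, and the `llm` callable (any function from prompt string to answer string) are all hypothetical names introduced here.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Event:
    t: float          # timestamp in seconds
    subgoal: str      # subgoal the robot was pursuing at time t
    caption: str      # sensory-level description (e.g. derived from vision or audio)
    subgoal_ok: bool  # whether the subgoal's success check passed


def summarize(events: List[Event]) -> List[str]:
    """Subgoal-level summary: one line per subgoal, using its latest status."""
    last = {}
    for e in events:
        last[e.subgoal] = e  # dict keeps subgoals in first-seen order
    return [
        f"{e.subgoal}: {'success' if e.subgoal_ok else 'FAILED'} at t={e.t}"
        for e in last.values()
    ]


def explain_failure(events: List[Event], llm: Callable[[str], str]) -> str:
    """Progressive explanation: zoom in on the first failed subgoal if there is
    one; otherwise ask about the plan as a whole."""
    failed = [e for e in events if not e.subgoal_ok]
    if failed:
        first = failed[0]
        detail = [e.caption for e in events if e.subgoal == first.subgoal]
        prompt = (
            f"Subgoal '{first.subgoal}' failed. Observations: "
            + "; ".join(detail)
            + ". Why did it fail?"
        )
    else:
        prompt = (
            "All subgoals passed but the task failed. Plan summary: "
            + "; ".join(summarize(events))
            + ". What is wrong with the plan?"
        )
    return llm(prompt)
```

The design point is that the LLM never sees raw sensor streams. It sees only the coarse summary first, and the detailed captions only for the subgoal where something went wrong, which keeps the prompt short.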

8.3 AutoRT: Safe Autonomy at Scale

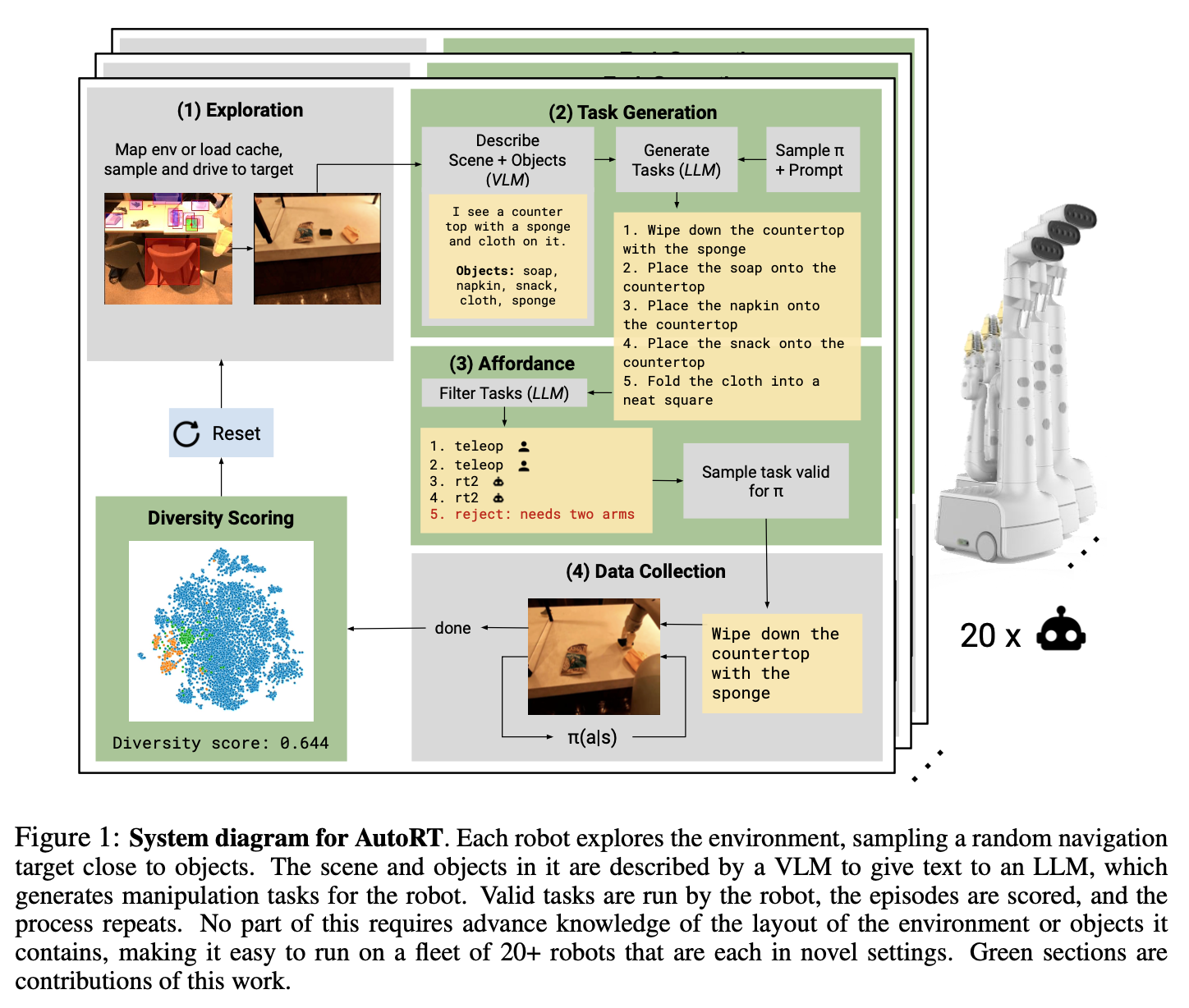

AutoRT [Ahn et al., 2024] takes Agentic Robotics to a new scale. It autonomously manages multiple robots by combining a VLM for scene understanding, an LLM for task proposal, and a Robot Constitution.

Over 7 months, more than 20 robots were operated across 4 buildings, collecting 77,000 robot episodes at a 1:5 human-to-robot supervision ratio.

The Robot Constitution, inspired by Asimov's Three Laws, layers three kinds of rules: basic safety rules, embodiment constraints, and task-specific restrictions. Rules like "do not throw objects at humans" and "remove packaging before microwaving food" are automatically generated by the LLM.

This corresponds to Agentic Coding's system prompts and safety guardrails. Just as Claude Code follows rules like "confirm before destructive operations" and "prevent security vulnerabilities," AutoRT's robots follow the Robot Constitution. The critical difference: safety violations in code can be reversed with git revert; a robot's physical accident cannot be undone. This irreversibility is the Robot Constitution's reason for existence.
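One way to picture the layered rule check is as an ordered filter over proposed tasks. The sketch below is an assumption-heavy illustration: the `Constitution` class, the `has_any` helper, and the keyword matching are hypothetical stand-ins (the source describes LLM-based rules, not string matching), and the embodiment rule is invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# (description, violates(task_text) -> bool)
Rule = Tuple[str, Callable[[str], bool]]


def has_any(*words: str) -> Callable[[str], bool]:
    """Hypothetical helper: flags a task that mentions any of the given words."""
    return lambda text: any(w in text for w in words)


@dataclass
class Constitution:
    layers: List[Tuple[str, List[Rule]]]  # ordered from most to least fundamental

    def check(self, task: str) -> Optional[str]:
        """Return the first violated rule as 'layer: description', else None."""
        text = task.lower()
        for layer_name, rules in self.layers:
            for description, violates in rules:
                if violates(text):
                    return f"{layer_name}: {description}"
        return None


CONSTITUTION = Constitution(layers=[
    ("safety", [("do not throw objects at humans", has_any("throw"))]),
    # Assumed embodiment rule, for illustration only.
    ("embodiment", [("single arm: no two-handed tasks",
                     has_any("both hands", "two hands"))]),
    ("task", [("remove packaging before microwaving food",
               lambda t: "microwave" in t and "unwrap" not in t)]),
])


def filter_tasks(proposals: List[str]) -> List[str]:
    """Keep only the proposed tasks that pass every layer."""
    return [t for t in proposals if CONSTITUTION.check(t) is None]
```

Because the layers are checked in order, a task that violates a basic safety rule is rejected before any embodiment or task-specific rule is consulted, which mirrors the hierarchy described above.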

8.4 BUMBLE: An Integrated Agent at Building Scale

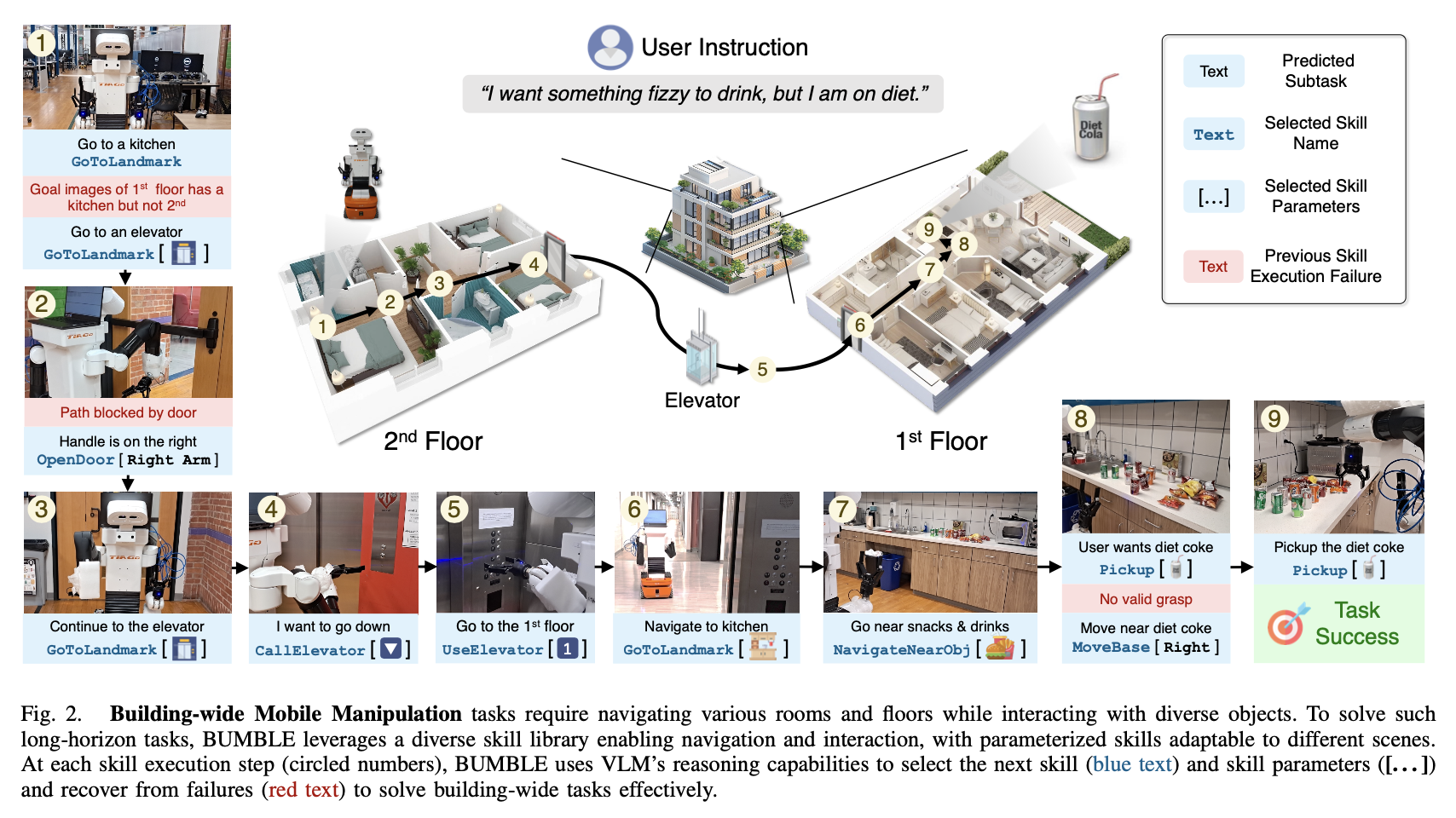

BUMBLE [Shah et al., 2024] is a comprehensive agentic framework for building-wide mobile manipulation that unifies reasoning and acting in a VLM.

A single VLM manages RGBD perception, a manipulation skill library, and dual-layered memory. It accepts free-form language instructions, selects and executes parameterized skills, and replans upon failure detection.
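The select-execute-replan cycle can be sketched as a small loop. This is a hypothetical illustration, not BUMBLE's code: `choose` stands in for the VLM, `Memory` reads "dual-layered" as short-term plus long-term (an assumption), and the `max_steps=12` default simply echoes the longest skill sequence reported in the evaluation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Skill = Callable[[dict], bool]           # takes parameters, returns True on success
Decision = Optional[Tuple[str, dict]]    # (skill name, parameters), or None when done


@dataclass
class Memory:
    short_term: List[str] = field(default_factory=list)  # events in the current trial
    long_term: List[str] = field(default_factory=list)   # lessons kept across trials


def run_task(
    instruction: str,
    skills: Dict[str, Skill],
    choose: Callable[[str, Memory], Decision],
    memory: Memory,
    max_steps: int = 12,
) -> bool:
    """Run until `choose` reports completion or the step budget runs out."""
    for _ in range(max_steps):
        decision = choose(instruction, memory)  # replanning happens here each step
        if decision is None:
            return True
        name, params = decision
        ok = skills[name](params)
        memory.short_term.append(f"{name}({params}) -> {'ok' if ok else 'failed'}")
        if not ok:
            # Record the failure so the next call to `choose` can avoid it.
            memory.long_term.append(f"avoid {name} with {params}")
    return False
```

Replanning is implicit in this design: the chooser is called again at every step with the updated memory, so a detected failure changes the next decision without a separate recovery path.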

Across more than 90 hours of evaluation, BUMBLE ran 70 trials with a 47.1% average success rate, skill sequences of up to 12 steps, and approximately 15 minutes per trial. The primary failure causes were VLM reasoning errors: collision prediction failures, incorrect object selection among 20-25 distractors, and elevator button misrecognition.

The 47.1% success rate provides an honest assessment of the current state. While Agentic Coding has reached production-ready maturity, Agentic Robotics remains at the research prototype stage. The gap's core: VLM reasoning capability is the system's ceiling.

8.5 PragmaBot: Learning from Experience

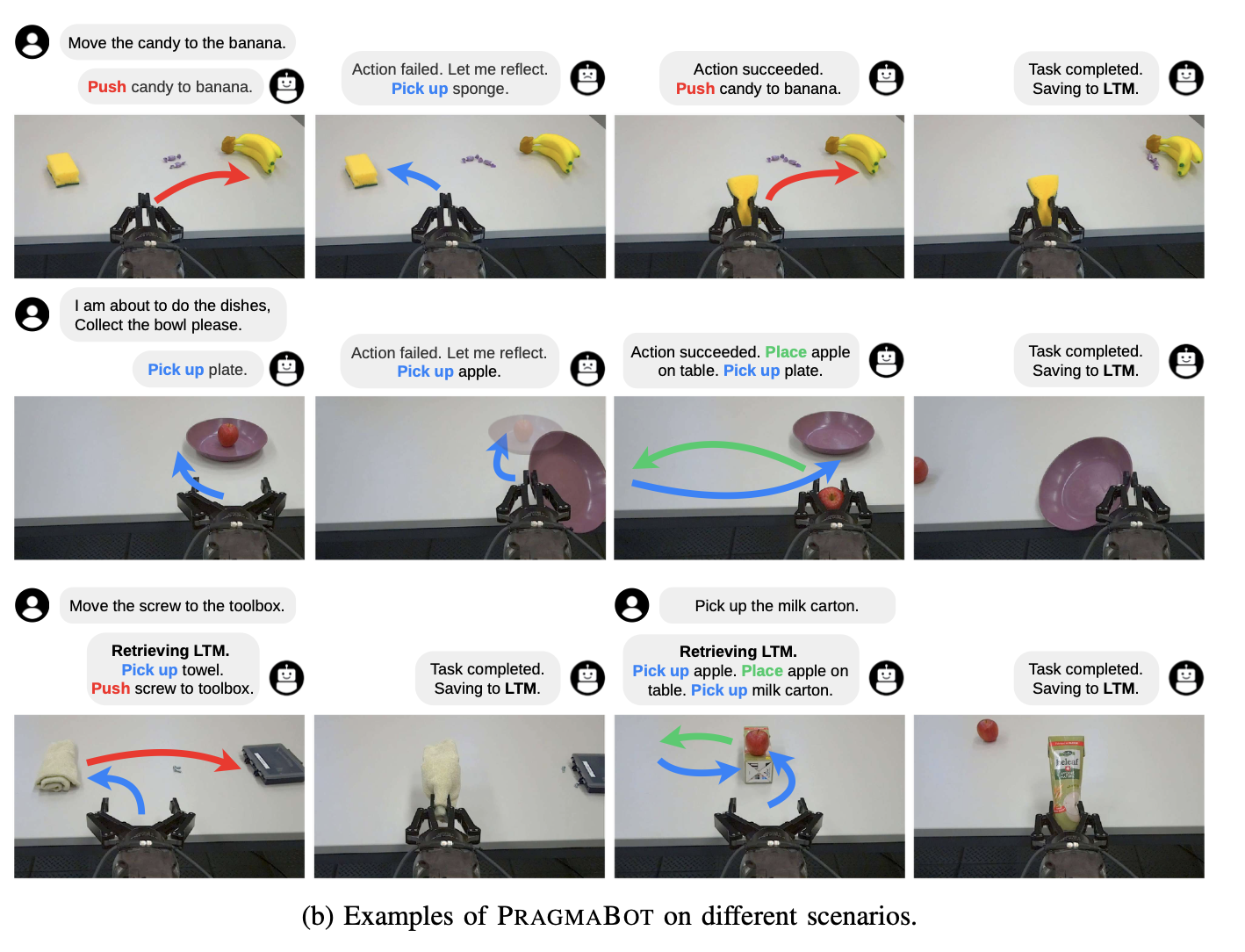

PragmaBot [2025] demonstrates the most complete form of the agentic loop. Using Verbal Reinforcement Learning, the LLM agent learns from experience via self-reflection and few-shot learning, without parameter updates.

The VLM serves as the robot's "brain" and "eyes" in three roles: (i) action planning, (ii) action success verification, (iii) experience summarization. Short-term memory (STM) tracks executed actions and feedback signals; long-term memory (LTM) stores lessons from past successes; retrieval-augmented generation (RAG) retrieves relevant knowledge from LTM for similar tasks.
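The interplay of STM, LTM, and retrieval can be sketched as follows. This is a minimal illustration under assumptions: the `LongTermMemory` class and `build_prompt` function are hypothetical, and word-overlap (Jaccard) similarity is a simple stand-in for whatever retriever the actual system uses.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


def _tokens(text: str) -> Set[str]:
    return set(text.lower().split())


def _similarity(a: str, b: str) -> float:
    """Jaccard overlap between the word sets of two task descriptions."""
    ta, tb = _tokens(a), _tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


@dataclass
class LongTermMemory:
    entries: List[Tuple[str, str]] = field(default_factory=list)  # (task, lesson)

    def add(self, task: str, lesson: str) -> None:
        self.entries.append((task, lesson))

    def retrieve(self, task: str, k: int = 2) -> List[str]:
        """Lessons from the k most similar stored tasks, skipping zero-overlap ones."""
        scored = [(_similarity(task, stored), lesson) for stored, lesson in self.entries]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [lesson for score, lesson in scored[:k] if score > 0]


def build_prompt(task: str, stm: List[str], ltm: LongTermMemory) -> str:
    """Combine retrieved lessons (LTM) with the current trial's history (STM)."""
    return "\n".join([
        "Task: " + task,
        "Lessons: " + "; ".join(ltm.retrieve(task)),
        "History: " + "; ".join(stm),
    ])
```

No model weights change anywhere in this sketch. All "learning" lives in the text that is stored, retrieved, and placed in the prompt, which is the sense in which the approach works without parameter updates.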

| Condition | Success Rate |
|---|---|
| Baseline (no STM) | 35% |
| STM-based self-reflection | 84% (2.4x) |
| LTM + RAG (new tasks) | 80% (single trial) |
| Naive prompting | 22% |

The STM improvement (35% to 84%) and LTM+RAG improvement on new tasks (22% to 80%) demonstrate that each agentic loop component — reflection, memory, retrieval — contributes meaningfully. The emergence of intelligent object interactions not anticipated by designers hints at the agentic loop's potential.

8.6 Evolution Stages of the Agentic Loop

| Stage | Period | Representative | Loop Structure |
|---|---|---|---|
| Open-loop | 2022 | SayCan, CaP | Plan, Execute (no feedback) |
| Reflection | 2023 | REFLECT | Plan, Execute, Reflect |
| Memory | 2024 | KARMA, BUMBLE | Plan, Execute, Reflect, Remember, Plan |
| Full closed-loop | 2025 | PragmaBot | Plan, Execute, Reflect, Remember, Learn, Plan |

Each stage adds precisely one core component, with PragmaBot completing the full closed loop through "learning from experience."

8.7 Comparison with Agentic Coding: Loop Speed and Cost

The loop structure is identical, but the physical world's non-determinism, irreversibility, and cost fundamentally change the difficulty of each step.

| Loop Stage | Agentic Coding | Agentic Robotics |
|---|---|---|
| Observe | File reads (ms, complete) | Cameras/sensors (noisy, partial) |
| Plan | Code generation (seconds) | Action sequence (seconds) |
| Execute | Code execution (ms, deterministic) | Physical action (minutes, stochastic) |
| Verify | Tests (ms) | Observation/simulation (minutes) |
| Reflect | Error analysis (stack traces) | Failure analysis (multisensory) |
| Remember | Context/files (instant) | STM/LTM/scene graphs |
| Revert | git revert | Impossible |
| Trial cost | ~Free | High |

PragmaBot's 35%-to-84% improvement suggests that the agentic loop remains effective when carried over to the physical world. BUMBLE's 47.1% reveals the physical world's additional challenges. The key is loop speed: Agentic Coding loops in seconds; PragmaBot loops in minutes. Per-iteration learning may be similar, but the difference in iterations per unit time largely determines final performance. Simulation acceleration (see Chapter 9) is the key strategy for closing this gap.
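The loop-speed argument is simple arithmetic, sketched below. The cycle times are illustrative assumptions only (a 10-second coding loop and a 5-minute robot loop), chosen to match the "seconds versus minutes" contrast rather than taken from any measurement.

```python
def iterations_per_hour(loop_seconds: float) -> float:
    """How many full loop iterations fit into one hour."""
    return 3600.0 / loop_seconds


# Assumed cycle times, for illustration only.
CODING_LOOP_S = 10.0     # one code-execute-debug cycle
ROBOT_LOOP_S = 5 * 60.0  # one physical plan-execute-reflect cycle

coding_rate = iterations_per_hour(CODING_LOOP_S)
robot_rate = iterations_per_hour(ROBOT_LOOP_S)

# Speedup a simulator would need for the robot loop to match the coding loop.
speedup_needed = coding_rate / robot_rate

print(f"coding: {coding_rate:.0f}/h, robot: {robot_rate:.0f}/h, "
      f"speedup needed: {speedup_needed:.0f}x")
```

Under these assumed numbers the coding loop gets 30 times as many learning opportunities per hour, which is the gap that faster-than-real-time simulation would have to close.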

8.8 Open Problems and Outlook

First, balancing safety and autonomy. AutoRT's Robot Constitution is a starting point for rule-based safety, but long-tail risks that cannot be enumerated by rules remain. What is needed is a hierarchical safety architecture that combines hardware-level reflexive safety (immediate cutoff) with software-level reasoning-based safety (LLM judgment).

Second, long-horizon cumulative error. If steps succeed independently, even 95% per-step success yields only about 36% over 20 steps (0.95^20 ≈ 0.36). BUMBLE's 47.1% reflects this. Like unit tests in code, designing mid-task verification checkpoints and dynamically determining replanning frequency are promising directions.
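The compounding effect, and the potential benefit of checkpoints, can be worked through numerically. The sketch assumes independent steps, and its retry model additionally assumes that every failure is detected at a checkpoint and can be reattempted from the same state. That is an optimistic assumption in the physical world, where many failures are not reversible. All function names are hypothetical.

```python
import math


def chain_success(p_step: float, n_steps: int) -> float:
    """Probability that n independent steps all succeed."""
    return p_step ** n_steps


def max_steps_for(p_step: float, target: float) -> int:
    """Largest n such that p_step ** n is still at least `target`."""
    return math.floor(math.log(target) / math.log(p_step))


def with_checkpoint_retry(p_step: float, n_steps: int, retries: int) -> float:
    """Chained success when each step may be retried up to `retries` times.

    Assumes failures are always detected and leave the state recoverable.
    """
    p_effective = 1 - (1 - p_step) ** (retries + 1)
    return p_effective ** n_steps


print(f"{chain_success(0.95, 20):.3f}")             # about 0.358
print(max_steps_for(0.95, 0.5))                     # 13
print(f"{with_checkpoint_retry(0.95, 20, 1):.3f}")  # about 0.951
```

Under these assumptions a single verified retry per step lifts a 20-step task from roughly 36% to roughly 95%, which is why mid-task verification is attractive whenever failures can be detected before they become irreversible.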

Third, real-time world models. Current VLAs are reactive: they act immediately from current observations. An internal world model that predicts "what happens if I grasp this object this way?" in real time does not yet exist. GR00T N1's dual-system architecture hints at this direction, but both prediction accuracy and speed are insufficient.

References

- Liu, Z. et al., "REFLECT: Summarizing Robot Experiences for Failure Explanation and Correction," arXiv:2306.15724, 2023.

- Ahn, M. et al., "AutoRT: Embodied Foundation Models for Large Scale Orchestration of Robotic Agents," arXiv:2401.12963, 2024.

- Shah, R. et al., "BUMBLE: Unifying Reasoning and Acting with Vision-Language Models for Building-wide Mobile Manipulation," arXiv:2410.06237, 2024.

- PragmaBot, "A Pragmatist Robot: Learning to Plan Tasks by Experiencing the Real World," arXiv:2507.16713, 2025.

- Wang, Z. et al., "KARMA: Augmenting Embodied AI Agents with Long-and-short Term Memory Systems," arXiv:2409.14908, 2024.

- NVIDIA, "GR00T N1: An Open Foundation Model for Generalist Humanoid Robots," arXiv:2503.14734, 2025.

- Ahn, M. et al., "Do As I Can, Not As I Say: Grounding Language in Robotic Affordances," arXiv:2204.01691, 2022.