Chapter 4: The Rise of Vision-Language-Action Models

Summary

From the perspective of Agentic Robotics, VLA models are not merely an "end-to-end alternative." When Code as Policy generates a high-level command like pick(cup), who actually executes the low-level motor commands that move the robot arm? VLA is positioning itself as precisely this skill executor — the low-level motion primitive that agentic system code calls upon. This chapter traces the emergence and evolution of VLA, analyzing its role within the Agentic Robotics framework.

4.1 Introduction: When Code Needs Low-Level APIs

The LLM Planners and Code as Policy approaches of Chapters 2-3 share a common assumption: executable low-level skills already exist. SayCan selects from 551 predefined skills; CaP calls APIs like robot.pick() and robot.place(). But the policy that actually moves the motors behind these APIs must be supplied separately.

This parallels how in agentic coding, when an LLM calls requests.get(url), the HTTP library handles actual network communication. The difference is that network libraries are deterministic, while robot low-level motion is stochastic and environment-dependent. VLA is the attempt to make this "low-level execution layer" general-purpose.
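The layering can be made concrete with a minimal sketch, in which a high-level `pick` call loops a low-level policy until the skill finishes or times out. All names here (`Observation`, `SkillPolicy`, `ScriptedReach`, `pick`) are hypothetical, the world is reduced to one dimension, and the scripted controller is only a placeholder for where a VLA would sit.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Observation:
    gripper_x: float  # toy 1-D gripper position (stand-in for images + proprioception)
    target_x: float   # toy 1-D object position


class SkillPolicy(Protocol):
    """Anything that maps (observation, instruction) to a low-level action."""
    def act(self, obs: Observation, instruction: str) -> float: ...


class ScriptedReach:
    """Hypothetical placeholder for a VLA: takes a bounded step toward the target."""

    def __init__(self, max_step: float = 0.05) -> None:
        self.max_step = max_step

    def act(self, obs: Observation, instruction: str) -> float:
        error = obs.target_x - obs.gripper_x
        return max(-self.max_step, min(self.max_step, error))


def pick(policy: SkillPolicy, obs: Observation, instruction: str,
         tolerance: float = 0.01, max_steps: int = 100) -> bool:
    """High-level skill: run the low-level policy in closed loop, report success."""
    for _ in range(max_steps):
        if abs(obs.target_x - obs.gripper_x) <= tolerance:
            return True
        obs.gripper_x += policy.act(obs, instruction)  # toy world update
    return False
```

Unlike `requests.get(url)`, the return value here is a success flag rather than a guaranteed result, which is why the calling code has to be prepared for failure.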

Meanwhile, the structural limitations of modular pipelines also catalyzed VLA. When the LLM plans, a separate perception module recognizes the environment, and yet another module handles low-level control, information is lost at each module boundary. "What if a single model handled everything?" — the VLA paradigm was born from this question.

4.2 PaLM-E: The Promise of Giant Multimodal Models

PaLM-E [Driess et al., 2023] is a 562B-parameter embodied multimodal language model that integrates images, robot states, and text as multimodal tokens into PaLM 540B.

PaLM-E's key finding was positive transfer: jointly training across diverse domains (internet images, robot data) improved performance on each domain. As scale increased, embodied capabilities improved while language abilities were preserved. It achieved state-of-the-art results on OK-VQA while also performing robot manipulation planning.

However, PaLM-E was an "embodied LM," not a VLA — it generated text-format plans rather than directly outputting actions. At 562B, real-time robot control was impractical. PaLM-E's true contribution was demonstrating that web knowledge can transfer to robot capabilities, directly laying the groundwork for RT-2.

4.3 RT-2: Establishing the VLA Paradigm

RT-2 [Brohan et al., 2023] is the paper that effectively established the VLA concept. The core idea is simple yet powerful: include robot actions in the VLM's output token space.

RT-2 co-fine-tunes PaLI-X (55B) or PaLM-E (12B) on robot trajectory data and internet VL data. Robot actions are encoded as text tokens: for example, the string "1 128 91 241 5 101 127" represents the end-effector displacement (position and rotation) and gripper state, with each value written as an integer bin index. Natural language responses and robot actions are thus learned in the same token space.
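A minimal sketch of this kind of binning-based action tokenization follows. RT-2 discretizes each continuous action dimension into 256 uniform bins; the normalization range of [-1, 1] and the function names `encode_action` and `decode_action` are assumptions made for illustration, not the paper's code.

```python
from typing import List, Sequence

NUM_BINS = 256  # number of uniform bins per action dimension


def encode_action(action: Sequence[float], low: float = -1.0, high: float = 1.0) -> str:
    """Map each continuous value to an integer bin and join the bins as text."""
    tokens: List[int] = []
    for value in action:
        clipped = min(max(value, low), high)
        fraction = (clipped - low) / (high - low)
        # The top edge (fraction == 1.0) would land in bin 256, so clamp to 255.
        tokens.append(min(int(fraction * NUM_BINS), NUM_BINS - 1))
    return " ".join(str(t) for t in tokens)


def decode_action(text: str, low: float = -1.0, high: float = 1.0) -> List[float]:
    """Map each bin index back to the center of its bin."""
    width = (high - low) / NUM_BINS
    return [low + (int(tok) + 0.5) * width for tok in text.split()]


print(encode_action([-1.0, 0.0, 1.0]))  # 0 128 255
```

Decoding returns bin centers, so the round trip loses up to half a bin width per dimension. That quantization error is the precision limit that later action representations try to remove.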

Across 6,000 evaluation trials, RT-2's most impressive results were its emergent capabilities:

- Novel Object Generalization: meaningful generalization to objects absent from training data

- Semantic Reasoning: "pick up an object that could be used as an improvised hammer" — selects a rock

- Chain-of-Thought: multi-step reasoning like "select a drink suitable for a tired person"

This was fundamental evidence that visual-language knowledge learned from the web transfers directly to robot control.

RT-2's limitations — 55B model latency, simple binning-based action tokenization limiting precision, and restricted data diversity — each determined subsequent research directions.

4.4 The Explosion of the Open-Source VLA Ecosystem

In 2024, VLAs were democratized. This transition directly parallels the GPT-4 (closed) to Llama (open-source) shift in the LLM world.

Open X-Embodiment: Building the Data Hub

The Open X-Embodiment Collaboration [2023] released the largest cross-embodiment dataset to date: 22 robot embodiments, 527 skills, and over 1 million trajectories, contributed by 21 institutions and 150+ researchers. RT-1-X (35M) and RT-2-X (55B) were trained on this data, demonstrating positive transfer at scale — data from other robots improves each robot's performance.

Octo: The Open-Source Baseline

Octo [Ghosh et al., 2024] is an open-source generalist policy trained on 800K trajectories from Open X-Embodiment. Combining a Transformer-based diffusion policy architecture with readout tokens and action chunking, it works out-of-the-box on 9 robot platforms and achieves a 55% success rate on WidowX after fine-tuning.
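Action chunking means the policy predicts several future actions per inference call instead of one. The sketch below shows the general receding-horizon pattern under simple assumptions: a scalar state, a hypothetical `predict_chunk` callable in place of the policy, and a fixed number of actions executed before re-planning. It is not Octo's implementation.

```python
from typing import Callable, List


def rollout_with_chunks(predict_chunk: Callable[[float], List[float]],
                        state: float,
                        total_steps: int,
                        execute_per_chunk: int) -> List[float]:
    """Predict a chunk, execute its first few actions, then re-plan."""
    if execute_per_chunk < 1:
        raise ValueError("execute_per_chunk must be at least 1")
    executed: List[float] = []
    while len(executed) < total_steps:
        chunk = predict_chunk(state)  # one (expensive) policy call
        if not chunk:
            raise ValueError("policy returned an empty chunk")
        for action in chunk[:execute_per_chunk]:
            if len(executed) == total_steps:
                break
            state += action  # toy dynamics: the action is a displacement
            executed.append(action)
    return executed
```

Executing more actions per chunk reduces the number of policy calls, at the cost of reacting more slowly to changes in the scene.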

OpenVLA: The 7B Breakthrough

OpenVLA [Kim et al., 2024] marked a turning point. Built on Llama 2 (7B) with DINOv2 and SigLIP visual encoders and trained on 970K real robot demonstrations, it outperforms RT-2-X (55B) by 16.5 percentage points in absolute task success rate with roughly one-eighth the parameters. LoRA fine-tuning enables adaptation on consumer GPUs, and MIT licensing has driven widespread community adoption.
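The reason LoRA fits on smaller GPUs comes down to parameter counting. LoRA freezes a weight matrix W of shape d_out x d_in and trains only a low-rank update BA, where B is d_out x r and A is r x d_in. The layer size and rank below are hypothetical values chosen for illustration, not OpenVLA's actual configuration.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when the whole weight matrix is fine-tuned."""
    return d_in * d_out


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters when only the low-rank factors A and B are trained."""
    return rank * (d_in + d_out)


# Hypothetical 4096 x 4096 projection with rank 32 (illustrative numbers only).
full = full_params(4096, 4096)
lora = lora_trainable_params(4096, 4096, 32)
print(full, lora, lora / full)  # 16777216 262144 0.015625
```

For this assumed layer, the trainable parameter count drops to about 1.6% of the full matrix, which shrinks the gradients and optimizer state that must be held in memory.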

TinyVLA: Extreme Compression

TinyVLA [2024] combines a sub-1B VLM with a diffusion head and reports a 25.7% higher success rate than OpenVLA, along with robustness across the language, object, position, appearance, background, and environment dimensions of generalization.

4.5 The Action Representation Revolution: Flow Matching and FAST

RT-2's action tokenization — discretizing continuous actions into token bins — is training-efficient but precision-limited. Two directions emerged to address this.

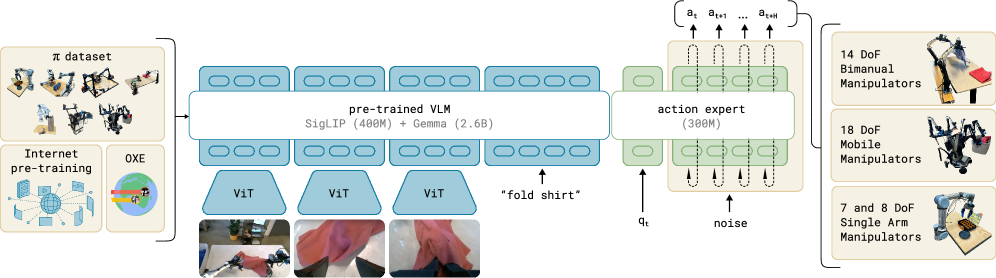

pi0: Flow Matching VLA

pi0 [Black et al., 2024] from Physical Intelligence builds a flow matching architecture on top of a pretrained VLM, generating continuous, precise actions rather than discrete tokens.
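At inference time, a flow matching model produces an action by starting from noise and integrating a learned velocity field. The sketch below uses plain Euler integration and replaces the neural network with a toy closed-form field, the conditional velocity of the straight-line path toward a known target. The step count, time convention, and all names are assumptions for illustration and do not reproduce pi0's architecture.

```python
import random
from typing import Callable, List

Vector = List[float]


def sample_action(velocity: Callable[[Vector, float], Vector],
                  dim: int, num_steps: int = 10, seed: int = 0) -> Vector:
    """Integrate dx/dt = velocity(x, t) from t=0 (noise) to t=1 (action)."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(dim)]  # initial Gaussian noise
    dt = 1.0 / num_steps
    for k in range(num_steps):
        t = k * dt  # t stays strictly below 1, so the toy field is well defined
        v = velocity(x, t)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


def make_toy_velocity(target: Vector) -> Callable[[Vector, float], Vector]:
    """Stand-in for the learned network: straight-line flow toward `target`."""
    def velocity(x: Vector, t: float) -> Vector:
        return [(ti - xi) / (1.0 - t) for ti, xi in zip(target, x)]
    return velocity


action = sample_action(make_toy_velocity([0.2, -0.4, 0.1]), dim=3)
```

The output is a vector of real numbers, with no bin boundaries involved. In a real model the velocity field is a network conditioned on images and language, and the result is only as precise as that network.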

pi0's most impressive results are in dexterous manipulation: on shirt folding, table clearing, grocery packing, and toast retrieval, it consistently outperforms OpenVLA and Octo.

pi0.5: Extending to the Open World

pi0.5 [Physical Intelligence, 2025] extends pi0 to long-horizon tasks such as kitchen and bedroom cleaning in completely new homes absent from the training data. By combining heterogeneous co-training with subtask prediction, it is presented as the first end-to-end learned robot system to achieve this kind of open-world generalization.

FAST: Revolutionizing Action Tokenization

FAST [2025] replaces RT-2's simple binning with DCT-based action tokenization, enabling high-frequency precise control. Combined with pi0, it scales to 10,000 hours of robot data while matching diffusion VLAs and reducing training time by up to 5x.
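The idea behind DCT-based tokenization can be sketched in a few lines: transform a chunk of actions along the time axis, then quantize the coefficients. Smooth trajectories concentrate their energy in a few low-frequency coefficients, so most quantized values become zero. The code below is a simplified illustration for a single action dimension with an assumed quantization `scale`; the published method includes further steps, such as compressing the quantized coefficients, that are omitted here.

```python
import math
from typing import List


def dct(signal: List[float]) -> List[float]:
    """Orthonormal DCT-II of a 1-D signal."""
    n = len(signal)
    out = []
    for k in range(n):
        s = sum(x * math.cos(math.pi * (i + 0.5) * k / n) for i, x in enumerate(signal))
        norm = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        out.append(norm * s)
    return out


def idct(coeffs: List[float]) -> List[float]:
    """Inverse of the orthonormal DCT-II above."""
    n = len(coeffs)
    out = []
    for i in range(n):
        s = coeffs[0] * math.sqrt(1.0 / n)
        for k in range(1, n):
            s += coeffs[k] * math.sqrt(2.0 / n) * math.cos(math.pi * (i + 0.5) * k / n)
        out.append(s)
    return out


def tokenize_chunk(chunk: List[float], scale: float = 10.0) -> List[int]:
    """Quantize DCT coefficients to integers (scale is an assumed hyperparameter)."""
    return [round(scale * c) for c in dct(chunk)]


def detokenize_chunk(tokens: List[int], scale: float = 10.0) -> List[float]:
    """Recover an approximate action chunk from quantized coefficients."""
    return idct([t / scale for t in tokens])


print(tokenize_chunk([0.5] * 8))  # [14, 0, 0, 0, 0, 0, 0, 0]
```

A constant eight-step chunk collapses to a single non-zero integer, whereas per-timestep binning would spend one token on every step. A larger `scale` gives a more accurate reconstruction at the cost of more non-zero coefficients.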

4.6 GR00T N1: The Dual-System VLA

GR00T N1 [NVIDIA, 2025] is a key milestone showing the direction of VLA evolution. It explicitly implements a Dual-System Architecture:

- System 2 (VLM): Slow reasoning for environment interpretation and language instruction understanding

- System 1 (Diffusion Transformer): Fast action for real-time motor behavior generation

The two modules are jointly trained end-to-end. NVIDIA has openly released the GR00T-N1-2B model checkpoint along with training data and benchmarks. The dual-system design offers a structural answer to the scale-vs-speed tradeoff that runs through all the VLAs discussed in this chapter (see Chapter 5 for detailed analysis of hierarchical approaches).
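The control flow implied by a dual-system design can be sketched as a loop in which the slow module refreshes a shared context only every few ticks while the fast module acts on every tick. The function name, the `slow_every` ratio, and the callables are hypothetical; the sketch shows only the scheduling idea, not GR00T N1's implementation or its actual rates.

```python
from typing import Any, Callable, List


def run_dual_system(num_ticks: int,
                    slow_every: int,
                    system2: Callable[[int], Any],
                    system1: Callable[[Any, int], Any]) -> List[Any]:
    """Run a fast policy every tick, refreshing the slow context periodically."""
    if slow_every < 1:
        raise ValueError("slow_every must be at least 1")
    context = None
    actions: List[Any] = []
    for tick in range(num_ticks):
        if tick % slow_every == 0:
            context = system2(tick)             # slow: scene and instruction understanding
        actions.append(system1(context, tick))  # fast: motor command from latest context
    return actions
```

Because the fast module reuses the most recent context, a large reasoning model does not have to run at the control rate of the motors.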

4.7 The Five Design Axes of VLA

Five key design axes run through 2024-2025 VLA research:

| Design Axis | Options | Representative Models |
|---|---|---|
| Action representation | Discrete token / Flow matching / Diffusion | RT-2 / pi0 / Octo |
| Model size | 55B / 7B / <1B | RT-2-X / OpenVLA / TinyVLA |
| Pretraining data | Web+robot / Robot only / Simulation | RT-2 / Octo / GR00T N1 |
| Architecture | Monolithic / Hierarchical / Dual-system | RT-2 / HAMSTER / GR00T N1 |
| Open-source | Closed / Open | RT-2, pi0 / OpenVLA, Octo, GR00T N1 |

4.8 Comparison with Agentic Coding: Where Does VLA Fit in the Agentic Framework?

The key question of this chapter is not "Does VLA replace modular systems?" but rather "What role does VLA play in Agentic Robotics?"

The distinction is clear: large models (VLAs) are fast but brittle, while system-level orchestration is slow but robust. A VLA on its own suffers from cascading errors in long-horizon tasks (BUMBLE at 47.1%), but when combined with agentic orchestration it can reliably execute individual subtasks. GR00T N1's dual-system design provides the structural answer: System 2 (the VLM) plans, and System 1 (the diffusion transformer) executes.
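The cascading-error argument can be checked with simple arithmetic. If each subtask succeeds independently with probability p, an n-step task succeeds with probability p^n. If an orchestrator can detect failure and retry each subtask up to k times, the per-step success rises to 1 - (1 - p)^k. The sketch below assumes independent attempts and perfect failure detection, and the numbers are hypothetical rather than measured results.

```python
def chain_success(p_step: float, num_steps: int) -> float:
    """Probability that every step of a sequential task succeeds."""
    return p_step ** num_steps


def step_success_with_retries(p_step: float, max_attempts: int) -> float:
    """Probability that a step succeeds within max_attempts independent tries."""
    return 1.0 - (1.0 - p_step) ** max_attempts


# Hypothetical numbers: a 90%-reliable skill in a 10-step task.
without_retry = chain_success(0.9, 10)
with_retry = chain_success(step_success_with_retries(0.9, 3), 10)
print(round(without_retry, 3), round(with_retry, 3))  # 0.349 0.99
```

Under these assumptions, a skill that looks reliable in isolation completes the ten-step task only about a third of the time, while three attempts per step bring completion to roughly 99%. Real retries are neither free nor independent, so the gain in practice is smaller.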

The asymmetry with Agentic Coding also makes sense in this context. In Agentic Coding, the LLM generates code, and the Python runtime executes it. The intermediate representation (code) is interpretable. In Agentic Robotics, the orchestrator (LLM/VLM) plans, and VLA executes. But VLA's intermediate representations are opaque neural activations. Code as Policy (→ Chapter 3) attempts to bridge this gap, and as CaP-X demonstrated, agentic scaffolding (test-time computation) can compensate for this opacity.

This matters for three reasons. First, debugging difficulty — when a code agent produces wrong output, you can read the generated code; when a VLA produces wrong actions, interpreting internal representations is nearly impossible. Second, the basis of generalization — code naturally inhabits the same token space as natural language, but robot actions require artificial tokenization. Third, data asymmetry — LLMs train on trillions of tokens; the largest robot dataset (Open X-Embodiment) has just over 1 million trajectories.

4.9 Open Problems and Outlook

Five fundamental limitations face the VLA paradigm: the unresolved action tokenization-vs-continuous debate, the scale-vs-speed tradeoff, the data bottleneck (collecting robot data costs orders of magnitude more than scraping web text), the still-limited scope of open-world generalization, and the absence of safety mechanisms for models that directly output actions.

The Hybrid Future: The ultimate direction is neither standalone VLA nor pure modular systems, but a hybrid. Two approaches look promising: combining classical TAMP with VLM reasoning, where frame-level progress monitoring triggers VLM intervention, and distilling system-level orchestration knowledge into the VLA to achieve both speed and robustness. This mirrors Agentic Coding, where the LLM generates code while type checkers and linters provide real-time correction: fast generation (VLA) is paired with rigorous verification (TAMP/orchestration).

These limitations drive the research directions explored in Chapters 5 (hierarchical planning), 8 (safety via Robot Constitution), and 9 (sim-to-real for data augmentation).

References

- Driess, D. et al., "PaLM-E: An Embodied Multimodal Language Model," arXiv:2303.03378, 2023.

- Brohan, A. et al., "RT-2: Vision-Language-Action Models Transfer Web Knowledge to Robotic Control," arXiv:2307.15818, 2023.

- Open X-Embodiment Collaboration, "Open X-Embodiment: Robotic Learning Datasets and RT-X Models," arXiv:2310.08864, 2023.

- Ghosh, D. et al., "Octo: An Open-Source Generalist Robot Policy," arXiv:2405.12213, 2024.

- Kim, M. J. et al., "OpenVLA: An Open-Source Vision-Language-Action Model," arXiv:2406.09246, 2024.

- Black, K. et al., "π0: A Vision-Language-Action Flow Model for General Robot Control," arXiv:2410.24164, 2024.

- Physical Intelligence, "π0.5: A Vision-Language-Action Model with Open-World Generalization," arXiv:2504.16054, 2025.

- NVIDIA, "GR00T N1: An Open Foundation Model for Generalist Humanoid Robots," arXiv:2503.14734, 2025.

- FAST, "Efficient Action Tokenization for Vision-Language-Action Models," arXiv:2501.09747, 2025.

- TinyVLA, "TinyVLA: Towards Fast and Data-Efficient Vision-Language-Action Models," arXiv:2409.12514, 2024.

- Chi, C. et al., "Diffusion Policy: Visuomotor Policy Learning via Action Diffusion," arXiv:2303.04137, 2023.

- Khazatsky, A. et al., "DROID: A Large-Scale In-The-Wild Robot Manipulation Dataset," arXiv:2403.12945, 2024.

- Brohan, A. et al., "AutoRT: Embodied Foundation Models for Large Scale Orchestration of Robotic Agents," arXiv:2401.12963, 2024.

- "What Matters in Building Vision-Language-Action Models," arXiv:2412.14058, 2024.