Chapter 3: Code as Policies — Programming Robot Control

Summary

When the limitations of natural language planning became apparent, researchers turned to code. Code offers precise numerical specification, control flow, third-party library access, and executability. Since Code as Policies (2022) named and established this paradigm, it has evolved from "code as API glue" to "code as reasoning tool" to "agentic code systems." This trajectory is the robotic paradigm most directly analogous to Agentic Coding.

3.1 Introduction: Why Code

The LLM Planners of Chapter 2 output natural language. "Place the cup on the left side of the table" is intuitive for humans but ambiguous for robots. Where exactly is "the left side"? How many centimeters from the table edge? At what angle should the cup be placed?

Code eliminates this ambiguity. `place(cup, position=(0.3, -0.1, 0.8), orientation=(0, 0, 0))` specifies exact values. Conditionals (`if obj.color == "red"`) and loops (`for obj in objects`) express control flow. Libraries like NumPy and Shapely perform spatial-geometric reasoning. And critically, code can be executed and its results observed.
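To make the contrast with natural language concrete, the following is a minimal sketch of what such policy code might look like. The `SceneObject` class, the `place` primitive (which only records commands here), and the `sort_red_objects` helper are hypothetical names chosen for illustration, not an API from any of the cited systems.

```python
from dataclasses import dataclass


@dataclass
class SceneObject:
    name: str
    color: str
    position: tuple  # (x, y, z) in metres, hypothetical world frame


# Hypothetical control primitive: records the command instead of moving a robot.
executed = []


def place(obj, position, orientation=(0.0, 0.0, 0.0)):
    executed.append((obj.name, position, orientation))


def sort_red_objects(objects, row_origin=(0.3, -0.1, 0.8), spacing=0.05):
    """Place every red object in a row along x, starting at row_origin."""
    slot = 0
    for obj in objects:                 # loop over detected objects
        if obj.color == "red":          # conditional on a perceived attribute
            x, y, z = row_origin
            place(obj, position=(x + slot * spacing, y, z))
            slot += 1
    return slot


objects = [
    SceneObject("cup", "red", (0.1, 0.2, 0.8)),
    SceneObject("bowl", "blue", (0.2, 0.2, 0.8)),
    SceneObject("block", "red", (0.4, 0.0, 0.8)),
]
n_placed = sort_red_objects(objects)
```

Every quantity that a sentence such as "put the red things in a row" leaves open (origin, spacing, orientation) appears here as an explicit, inspectable parameter.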

This insight is the core of the Code as Policies paradigm, and simultaneously the point where Agentic Coding and Agentic Robotics come closest together.

3.2 Code as Policies: Naming the Paradigm

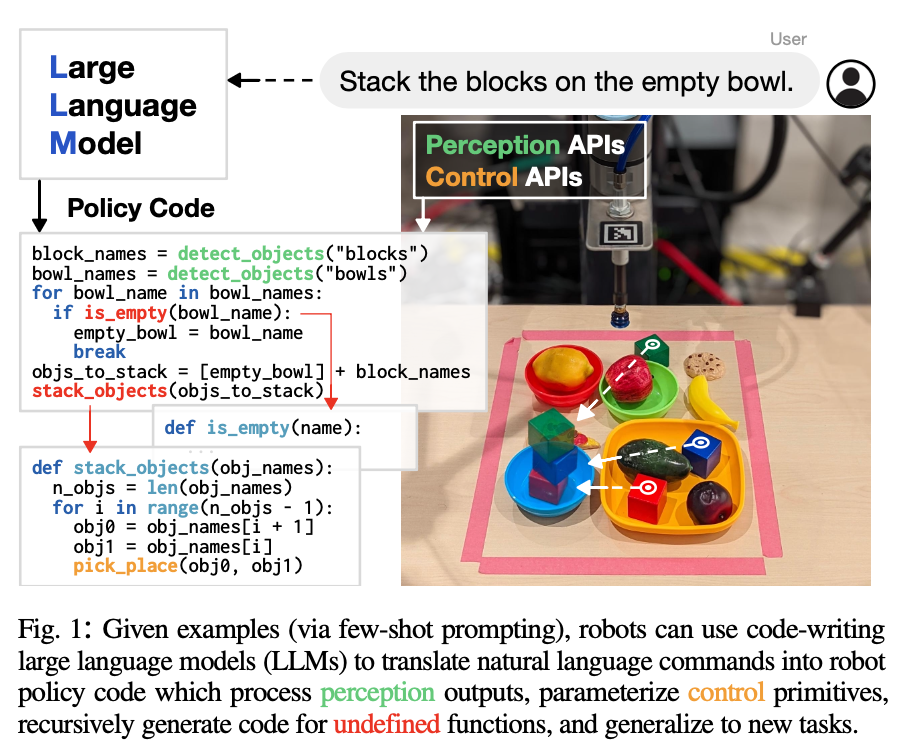

Code as Policies (CaP) [Liang et al., 2022] established the paradigm of using LLMs to directly generate robot policy code from natural language commands. Given few-shot examples pairing natural language commands with corresponding policy code, the LLM generates code for new commands that processes object detector outputs and parameterizes control primitive APIs.

The key design principle is hierarchical code generation — decomposing complex tasks into function call hierarchies, maintaining appropriate abstraction at each level. The system also references third-party libraries like NumPy and Shapely for spatial-geometric reasoning.
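One way to picture hierarchical code generation is a generator that, whenever the code it produced calls a function that is not yet defined, asks for that function too. The sketch below is an illustration under stated assumptions rather than the CaP implementation: `FAKE_LLM` is a lookup table standing in for a language model, and `pick` and `place_on` are hypothetical control primitives.

```python
import ast
import builtins

# Stand-in for an LLM: maps a function name to generated source (hypothetical).
FAKE_LLM = {
    "stack_blocks": (
        "def stack_blocks(names):\n"
        "    for lower, upper in zip(names, names[1:]):\n"
        "        put_on_top(upper, lower)\n"
    ),
    "put_on_top": (
        "def put_on_top(upper, lower):\n"
        "    pick(upper)\n"
        "    place_on(lower)\n"
    ),
}


def called_names(source):
    """Return the names that the source calls as plain functions."""
    return {
        node.func.id
        for node in ast.walk(ast.parse(source))
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
    }


def generate_hierarchy(name, namespace, depth=0, max_depth=5):
    """Define `name` in namespace, first generating any helper it calls."""
    if depth > max_depth:
        raise RecursionError(f"hierarchy too deep while generating {name!r}")
    source = FAKE_LLM[name]
    for callee in sorted(called_names(source)):
        known = callee in namespace or hasattr(builtins, callee) or callee == name
        if not known:
            generate_hierarchy(callee, namespace, depth + 1, max_depth)
    exec(source, namespace)


log = []
namespace = {
    "pick": lambda name: log.append(("pick", name)),
    "place_on": lambda name: log.append(("place_on", name)),
}
generate_hierarchy("stack_blocks", namespace)
namespace["stack_blocks"](["a", "b", "c"])
```

The top-level function stays at the level of the task ("stack these blocks"), while `put_on_top` is filled in one level down in terms of primitives, so each level keeps its own abstraction.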

On seen instructions and attributes in simulation, CaP achieved a success rate above 90%, and on unseen instructions it outperformed supervised imitation learning (IL) baselines. Real robot demonstrations included mobile robot kitchen navigation, robotic arm shape drawing, pick-and-place, and tabletop manipulation.

CaP's limitations were also clear. The first was abstraction level mismatch: performance degraded when new commands differed in abstraction level from the prompt examples. The second was the lack of error recovery: there was no automatic correction mechanism when generated code failed at execution. The third was API dependency: perception and control primitive APIs had to be designed in advance. All three limitations reduce to the same root cause, the absence of an agentic loop. Code was generated, but nothing observed the execution results and iterated on them.

3.3 Code-as-Symbolic-Planner: Code That Reasons

Code-as-Symbolic-Planner [Chen et al., 2025] extended CaP's "code as API calls" to "code as reasoning tool." Instead of using code as mere glue between APIs, LLMs are guided to use code as a solver, planner, and checker.

The core idea combines text-based reasoning (common sense) with code generation (symbolic computing). The LLM generates optimization code to solve constrained planning problems, and the generated code also serves as a constraint checker, forming a self-verification loop.
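The solver-plus-checker pattern can be illustrated with a toy placement problem. This is a sketch under assumptions, not the method of Chen et al.: the slots, objects, and constraints are invented for the example, and an exhaustive search stands in for the optimization code an LLM might generate.

```python
from itertools import permutations

SLOTS = range(4)                      # hypothetical slot indices, left to right
OBJECTS = ["cup", "plate", "knife"]   # hypothetical objects to arrange


def check(plan):
    """Constraint checker: return the names of all violated constraints."""
    violated = []
    if len(set(plan.values())) != len(plan):
        violated.append("one object per slot")
    if not plan["cup"] < plan["plate"]:
        violated.append("cup left of plate")
    if abs(plan["knife"] - plan["plate"]) != 1:
        violated.append("knife next to plate")
    return violated


def solve():
    """Solver: enumerate assignments and return the first one the checker accepts."""
    for slots in permutations(SLOTS, len(OBJECTS)):
        plan = dict(zip(OBJECTS, slots))
        if not check(plan):
            return plan
    return None


plan = solve()
```

Because the solver and the checker share one definition of the constraints, any plan, whether produced by search or proposed directly by the model, can be verified with the same `check` call before it is used.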

The result was a 24.1% average improvement in success rate over the best baseline, with particularly strong scalability on high-complexity tasks. This converges with AutoTAMP's [Chen et al., 2023] idea of "using the LLM as translator and checker" (see Chapter 5).

This evolution is already routine in Agentic Coding. When an LLM generates a regex to validate a pattern or writes a script to analyze data, it is using "code as a tool for thought." Code-as-Symbolic-Planner applies this same pattern to robot task and motion planning (TAMP).

3.4 CaP-X: When Coding Agents Meet Robots

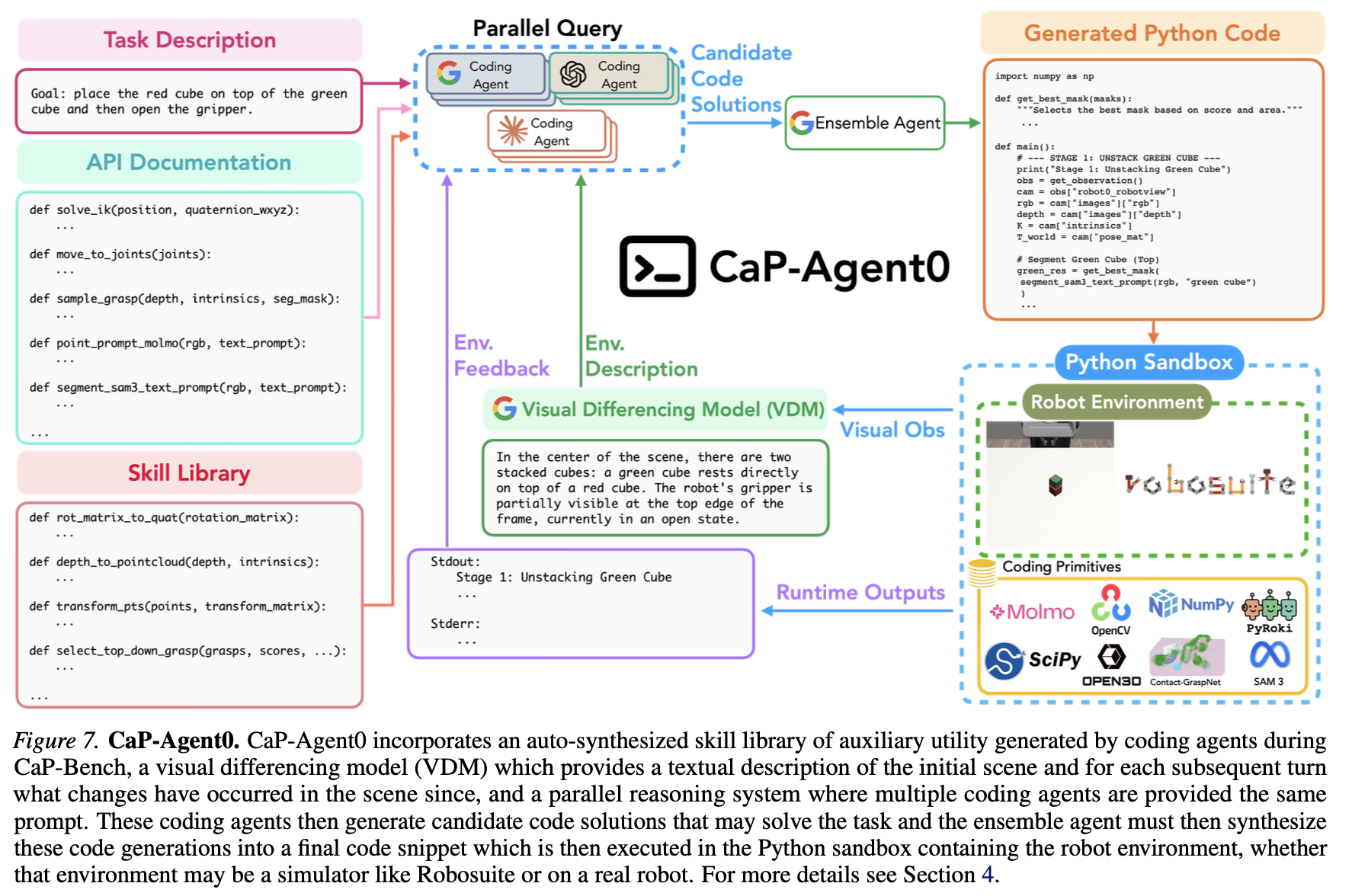

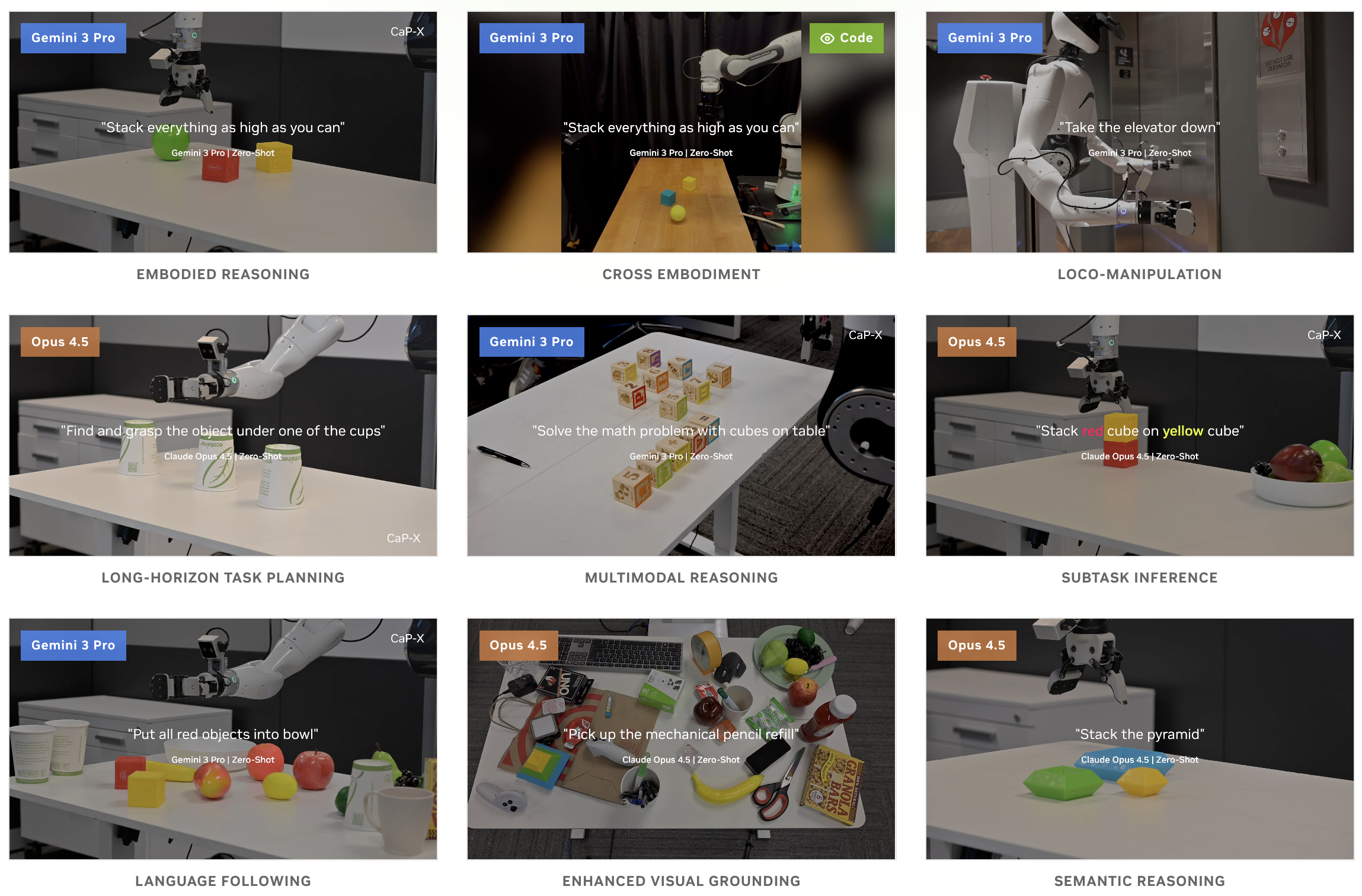

CaP-X [Fu et al., 2026] provides an open-source framework for systematically studying and benchmarking Code-as-Policy agents. It offers the most comprehensive evaluation framework in the field and serves as the most direct bridge between Agentic Coding and Agentic Robotics.

CaP-X comprises four components:

CaP-Gym integrates 187 tasks from RoboSuite, LIBERO-PRO, BEHAVIOR, and other environments. CaP-Bench evaluates agents along three axes: abstraction level (human-crafted macros to atomic primitives), temporal interaction (zero-shot versus multi-turn), and perceptual grounding (visual feedback modality). CaP-Agent0 achieves human-level reliability on multiple manipulation tasks without any training. CaP-RL improves success rates through RL with verifiable rewards.

CaP-X's key finding from evaluating 12 models is among the most important insights in this field: performance scales with human-crafted abstraction, but drops sharply when these pre-defined abstractions are removed. Crucially, this gap can be closed through agentic scaffolding — multi-turn interaction, structured execution feedback, visual differencing, automatic skill synthesis, and ensembled reasoning recover performance even at the low-level primitive level.
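Two of these scaffolding ingredients, multi-turn interaction and structured execution feedback, can be sketched in a few lines. Everything here is a stand-in chosen for illustration: `fake_model` plays the role of the LLM with a scripted first draft and revision, and `move_to` is a hypothetical primitive. It does not reproduce CaP-X's actual interfaces.

```python
def fake_model(task, feedback):
    """Stand-in for an LLM: the first draft has a bug, the revision fixes it."""
    if feedback is None:
        return "result = move_to(target)"          # `target` is undefined
    return "target = (0.3, -0.1, 0.8)\nresult = move_to(target)"


def run_once(code, api):
    """Execute code and return structured feedback instead of crashing."""
    namespace = dict(api)
    try:
        exec(code, namespace)
    except Exception as exc:
        return {"ok": False, "result": None,
                "error": f"{type(exc).__name__}: {exc}"}
    return {"ok": True, "result": namespace.get("result"), "error": None}


def agentic_loop(task, api, max_turns=3):
    """Generate, execute, observe, and revise until success or budget exhaustion."""
    feedback, history = None, []
    for _ in range(max_turns):
        code = fake_model(task, feedback)
        feedback = run_once(code, api)
        history.append(feedback)
        if feedback["ok"]:
            break
    return history


api = {"move_to": lambda position: f"reached {position}"}   # hypothetical primitive
history = agentic_loop("move above the cup", api)
```

The first turn fails with a `NameError`, which is handed back to the model as a dictionary rather than a raw crash, and the second turn succeeds. On a robot, the feedback record would also need to carry perceptual evidence such as the visual differencing mentioned above.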

3.5 Code, Natural Language, and Direct Actions: Three Approaches Compared

At this point, comparing three approaches to robot control is instructive.

| Dimension | Code as Policy | Natural Language | VLA (Direct Action) |
|---|---|---|---|
| Precision | High (numerical spec) | Medium | High (continuous) |
| Generalization | High (new API combos) | High (language flexibility) | Low (data-dependent) |
| Interpretability | Very high | High | Low |
| Real-time response | Low (generate + execute) | Low | High |
| New environment adaptation | Possible with API | Possible with description | Requires retraining |

RL-GPT [2024] attempted a hybrid combination, proposing a two-tier hierarchical framework with a code-based "slow agent" and an RL-based "fast agent." High-level actions that can be expressed as code are handled through code, while actions requiring precise control use RL. In Minecraft, it obtained diamonds within one day of training on a single RTX 3090 GPU, but transfer to real robots was not validated.

Natural Language as Policies (NLaP) [Mikami et al., 2024] explored the opposite direction — using natural language itself as coordinate-level control commands without code. It falls short of code-based approaches on precise control but probes the possibility of a code-free path.

3.6 Comparison with Agentic Coding: The Fundamental Difference in Execution Environment

Code as Policies is the robotic paradigm most directly analogous to Agentic Coding. Just as Claude Code generates Python/bash code, executes it, and observes the results, CaP has an LLM generate robot control code for execution. But beneath this structural similarity lies a fundamental difference.

| Dimension | Agentic Coding | Code as Policy (Robotics) |
|---|---|---|
| Execution environment | Deterministic (computer) | Stochastic (physical world) |
| Error feedback | Precise (stack traces) | Ambiguous (sensor noise, partial observation) |
| Execution cost | Nearly free, instant | High: time, energy, safety risk |
| Reversibility | git revert, undo | Irreversible (broken objects, collisions) |
| API design | Rich ecosystem | Manual design required |
| Testing | Instant unit/integration tests | Sim2real gap |

CaP-X's key finding quantifies this difference precisely. Without human-crafted abstraction, performance drops sharply, but agentic scaffolding can close the gap. This same pattern appears in Agentic Coding: working with raw system calls instead of well-designed APIs is difficult, but an agent that iteratively tries and receives feedback can eventually solve the problem.

The implication is profound. Agentic test-time computation can substitute for human-crafted abstraction. In the long run, instead of pre-designed APIs or skill libraries, agents might discover and build their own abstractions through iterative trial and error. The critical barrier is that the cost of this trial and error in the physical world exceeds that of the digital world by orders of magnitude.

3.7 Open Problems and Outlook

The central open problem for the Code as Policy paradigm is the physical cost of the agentic loop.

In Agentic Coding, the loop of generating code, executing it, analyzing the error, and fixing it runs in seconds and can be repeated virtually without limit. The agentic scaffolding CaP-X demonstrated fundamentally depends on this unlimited iteration. But in robot environments, each iteration incurs physical time (minutes), energy consumption, safety risks, and irreversible environmental changes.

Three directions address this problem:

First, agentic loops within simulation. As CaP-RL demonstrated, performing the agentic loop in simulation and transferring results to the real world is viable. The sim2real gap remains a bottleneck, but SIMPLER [Li et al., 2024] and Lang4Sim2Real [2024] are narrowing it (see Chapter 9).

Second, strengthening code-level verification. Code-as-Symbolic-Planner's approach of "code as checker" validates plan feasibility at the code level before physical execution. Combined with AutoTAMP's formal verification, this can filter out high-failure-probability plans in advance (see Chapter 5).
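As a rough illustration of checking a plan at the code level before any physical execution, generated policy code can be dry-run against a recording stub and its commands validated against workspace limits. This is a sketch under assumptions, not the verification used by Code-as-Symbolic-Planner or AutoTAMP: the `WORKSPACE` bounds and the `move_to` primitive are hypothetical, and `exec` is used without sandboxing for brevity.

```python
# Hypothetical reachable workspace in metres, per axis: (low, high).
WORKSPACE = {"x": (0.0, 0.6), "y": (-0.3, 0.3), "z": (0.75, 1.2)}


def dry_run(policy_code):
    """Run policy code against a recording stub instead of the robot."""
    commands = []
    stub_api = {"move_to": lambda x, y, z: commands.append((x, y, z))}
    exec(policy_code, stub_api)
    return commands


def violations(commands, workspace=WORKSPACE):
    """Return (command index, axis, value) for every out-of-bounds coordinate."""
    bad = []
    for index, point in enumerate(commands):
        for axis, value in zip("xyz", point):
            low, high = workspace[axis]
            if not low <= value <= high:
                bad.append((index, axis, value))
    return bad


def safe_to_execute(policy_code):
    """Gate: only plans with no violations should reach the physical robot."""
    return not violations(dry_run(policy_code))


safe_plan = "move_to(0.3, -0.1, 0.8)\nmove_to(0.3, -0.1, 0.9)"
unsafe_plan = "move_to(0.3, -0.1, 0.8)\nmove_to(0.9, 0.0, 0.8)"
```

A rejected plan costs only a function call, and the list of violations can be returned to the model as feedback, so part of the iteration happens before the robot moves.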

Third, incremental abstraction construction. CaP-X's finding that "agentic scaffolding can substitute for human-crafted abstraction" suggests a path where robots build their own skill libraries through iterative experience. PragmaBot's [2025] experience-based learning represents an early step in this direction (see Chapter 8).

Code as Policy is the most direct attempt to transplant Agentic Coding's success formula into robotics. For this transplant to be complete, designing an efficient agentic loop adapted to the cost structure of the physical world is essential.

References

- Liang, J. et al., "Code as Policies: Language Model Programs for Embodied Control," arXiv:2209.07753, 2022.

- Chen, Y. et al., "Code-as-Symbolic-Planner: Foundation Model-Based Robot Planning via Symbolic Code Generation," arXiv:2503.01700, 2025.

- Fu, M. et al., "CaP-X: A Framework for Benchmarking and Improving Coding Agents for Robot Manipulation," arXiv:2603.22435, 2026.

- Chen, Y. et al., "AutoTAMP: Autoregressive Task and Motion Planning with LLMs as Translators and Checkers," arXiv:2306.06531, 2023.

- Li, X. et al., "Evaluating Real-World Robot Manipulation Policies in Simulation (SIMPLER)," arXiv:2405.05941, 2024.

- RL-GPT, "Integrating Reinforcement Learning and Code-as-Policy," arXiv:2402.19299, 2024.

- Mikami, Y. et al., "Natural Language as Policies: Reasoning for Coordinate-Level Embodied Control with LLMs," arXiv:2403.13801, 2024.

- Lang4Sim2Real, "Natural Language Can Help Bridge the Sim2Real Gap," arXiv:2405.10020, 2024.

- Huang, W. et al., "Language Models as Zero-Shot Planners," arXiv:2201.07207, 2022.

- Ahn, M. et al., "Do As I Can, Not As I Say: Grounding Language in Robotic Affordances," arXiv:2204.01691, 2022.

- Chi, C. et al., "Diffusion Policy: Visuomotor Policy Learning via Action Diffusion," arXiv:2303.04137, 2023.