Chapter 5: Hierarchical Planning — From High-Level to Low-Level

Summary

Between the instruction "make a sandwich" and joint torque commands lies a vast abstraction gap. LLM Planners excel at high-level planning but cannot perform precise motor control; VLAs directly output actions but struggle with complex reasoning. This chapter examines four approaches to hierarchically bridging these two worlds: formal specification (AutoTAMP), language motion (RT-H), real-time feedback integration (Hi Robot), and off-domain data utilization (HAMSTER).

5.1 Introduction: Why Hierarchy Is Necessary

In Agentic Coding, hierarchical abstraction comes naturally. Given "fix this bug," an agent first explores relevant files (high-level), identifies the specific function (mid-level), and edits code (low-level). Each level's actions are discrete and interpretable, with IDE autocomplete handling low-level details.

In robotics, this hierarchy is not self-evident. The distance from the high-level instruction "pick up the cup" to continuous torque commands across 7 degrees of freedom is far greater than in coding. And what to use as the intermediate representation — formal logic? language motion? 2D paths? atomic commands? — is a critical design decision.

Five reasons are consistently cited across hierarchical approaches: (1) abstraction level mismatch, (2) data efficiency through separate training, (3) off-domain data utilization, (4) ease of human intervention, and (5) debuggability and interpretability.

5.2 AutoTAMP: The Formal Verification Path

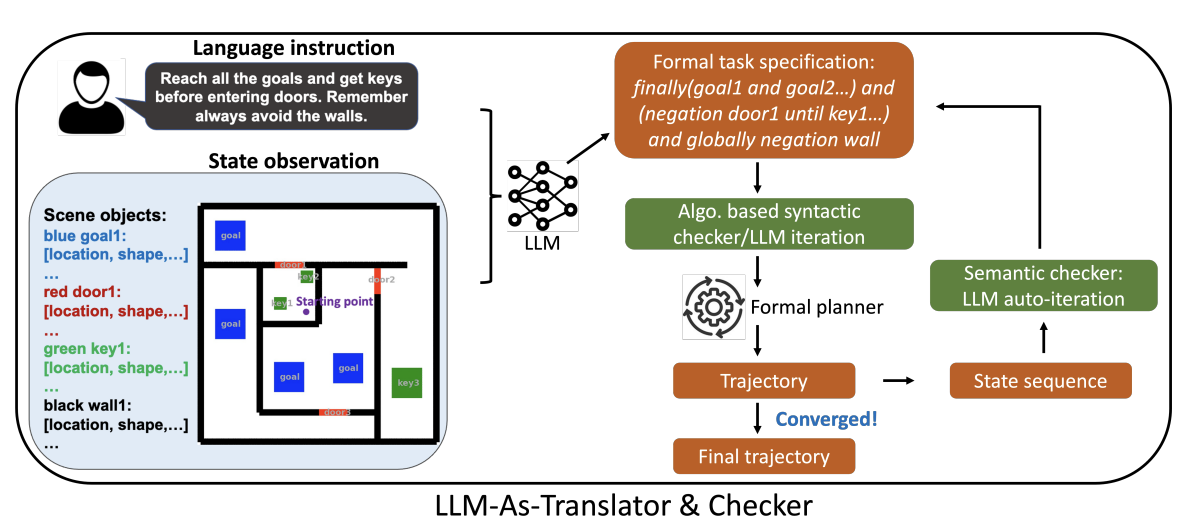

AutoTAMP [Chen et al., 2023] uses the LLM not as a direct planner but as a translator from natural language to formal specifications (Signal Temporal Logic, etc.) and a checker. The key insight: "it is better to use the LLM as a preprocessor and verifier for planners than as the planner itself."

The LLM translates natural language task descriptions into formal representations like STL, which traditional TAMP algorithms consume to produce task and motion plans. Autoregressive Re-prompting automatically detects and corrects both syntactic and semantic errors.
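The translate-check-re-prompt loop described above can be sketched as follows. This is a minimal illustration under stated assumptions, not AutoTAMP's implementation: `translate_with_reprompting`, `syntax_errors`, and `make_stub_llm` are hypothetical names, the LLM is replaced by a stub that replays canned answers, and the checker only tests parenthesis balance. The semantic check is omitted.

```python
from typing import Callable, List, Optional, Tuple


def syntax_errors(spec: str) -> List[str]:
    """Toy syntactic check (hypothetical): parentheses must balance."""
    depth = 0
    for ch in spec:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return ["unmatched ')'"]
    return ["unclosed '('"] if depth > 0 else []


def translate_with_reprompting(
    task: str,
    llm: Callable[[str], str],
    checker: Callable[[str], List[str]],
    max_rounds: int = 3,
) -> Tuple[Optional[str], int]:
    """Ask the LLM for a spec; on errors, feed them back and ask again."""
    prompt = f"Translate to STL: {task}"
    for attempt in range(1, max_rounds + 1):
        spec = llm(prompt)
        errors = checker(spec)
        if not errors:
            return spec, attempt
        # Re-prompt with the failed attempt and the detected errors.
        prompt = (
            f"Translate to STL: {task}\n"
            f"Previous attempt: {spec}\n"
            f"Errors: {'; '.join(errors)}\n"
            "Please return a corrected specification."
        )
    return None, max_rounds


def make_stub_llm(responses: List[str]) -> Callable[[str], str]:
    """Stand-in for a real LLM call: replays canned answers in order."""
    it = iter(responses)
    return lambda prompt: next(it)


# Illustrative STL-like strings; the predicates are made up for this example.
stub = make_stub_llm([
    "F[0,10](in_room_A & G[0,5](holding_key",    # missing closing parentheses
    "F[0,10](in_room_A & G[0,5](holding_key))",  # corrected on the second try
])
spec, rounds = translate_with_reprompting(
    "reach room A while holding the key", stub, syntax_errors
)
```

In a real pipeline the accepted specification would then be passed to the TAMP solver. Here the loop only returns the specification and the number of rounds it took.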

Results were strong: with GPT-4, the full AutoTAMP pipeline achieved success rates of 82.5-87.7% on single-agent tasks and 100% on multi-agent tasks, significantly outperforming direct LLM planning on geometrically and temporally constrained tasks.

AutoTAMP's core idea — "LLM as translator, classical methods as executor" — was directly inherited by Code-as-Symbolic-Planner [Chen et al., 2025] (see Chapter 3).

5.3 RT-H: Language as Intermediate Representation

RT-H [Belkhale et al., 2024] proposes a completely different intermediate representation: language motion. Fine-grained language descriptions like "move arm forward" and "close gripper" bridge high-level tasks and low-level actions.

The key insight is that semantically different tasks may share similar low-level motions. "Pick up the cup" and "pour from the bottle" are entirely different tasks, but "extend arm forward" is a shared low-level motion. Language motion makes this shared structure explicit, improving data efficiency.
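To make the shared structure concrete, the sketch below counts how many distinct tasks contribute examples for each language motion. The episode annotations are invented for illustration and are not taken from the RT-H dataset. `EPISODES` and `motion_support` are hypothetical names.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical annotations: each task is labeled with a sequence of language motions.
EPISODES: Dict[str, List[str]] = {
    "pick up the cup": ["move arm forward", "close gripper", "move arm up"],
    "pour from the bottle": [
        "move arm forward", "close gripper", "move arm up", "rotate arm right",
    ],
}


def motion_support(episodes: Dict[str, List[str]]) -> Dict[str, int]:
    """Count how many distinct tasks supply examples of each language motion."""
    counts: Counter = Counter()
    for motions in episodes.values():
        counts.update(set(motions))  # count each motion once per task
    return dict(counts)


support = motion_support(EPISODES)
# Motions that appear in more than one task receive training signal from all of them.
shared = sorted(m for m, n in support.items() if n > 1)
```

In this toy data three of the four motions are shared, so a policy conditioned on language motion would see examples of them from both tasks rather than from one.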

RT-H additionally enables in-execution human correction — users can modify robot behavior in real-time at the language motion level, naturally integrating corrections like "not that one, move left."

5.4 Hi Robot: Alignment with Human Intent

Hi Robot [Shi et al., 2025] adds real-time human feedback integration to hierarchical VLAs. A high-level VLM interprets current observations and user utterances to generate atomic commands ("grasp the cup"), which a low-level policy executes.

Hi Robot's core contribution is real-time response to user corrections like "not that one." Evaluated on single-arm, bimanual, and mobile platforms across scenario-based tasks (table clearing, sandwich making, grocery shopping), it outperformed both API-based VLMs and flat VLAs on human intent alignment and task success.
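The two-level loop with mid-execution correction can be sketched as below. This is a toy illustration, not Hi Robot's code: `HighLevelStub` is a rule-based stand-in for the high-level VLM, `low_level_execute` stands in for the low-level policy, and the object names are invented.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class HighLevelStub:
    """Rule-based stand-in for the high-level VLM (hypothetical)."""
    candidates: List[str]
    rejected: List[str] = field(default_factory=list)
    current: Optional[str] = None

    def next_command(self, observation: str, utterance: Optional[str]) -> str:
        # The stub ignores the observation; a real VLM would condition on it.
        # A correction rejects the object currently being targeted.
        if utterance == "not that one" and self.current is not None:
            self.rejected.append(self.current)
        remaining = [c for c in self.candidates if c not in self.rejected]
        self.current = remaining[0] if remaining else None
        return f"grasp the {self.current}" if self.current else "stop"


def low_level_execute(command: str) -> str:
    """Stand-in for the low-level policy: just reports the command."""
    return f"executing: {command}"


def run(high: HighLevelStub, utterances: List[Optional[str]]) -> List[str]:
    """One high-level decision per step, each handed to the low level."""
    log = []
    for step, utterance in enumerate(utterances):
        command = high.next_command(f"frame {step}", utterance)
        log.append(low_level_execute(command))
    return log


# The user objects at step 1, so the target switches from the red to the blue cup.
log = run(
    HighLevelStub(candidates=["red cup", "blue cup"]),
    [None, "not that one", None],
)
```

Because the correction is handled entirely at the atomic-command level, the low-level policy needs no change to support it.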

5.5 HAMSTER: Leveraging Off-Domain Data

HAMSTER [Li et al., 2025] delivers a key message: hierarchical separation enables not just abstraction but off-domain data utilization.

A high-level VLM predicts 2D end-effector paths from RGB images and task descriptions, while a low-level 3D-aware policy performs precise manipulation along these paths. Critically, the high-level VLM can be fine-tuned with action-free videos, hand-drawn sketches, and simulation data — cheap alternatives to expensive robot data.
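One practical question with a 2D-path interface is how to hand a variable-length path to the low-level policy. The sketch below shows one plausible preprocessing step, arc-length resampling to a fixed number of waypoints. This is an assumption made for illustration and is not described in the text as HAMSTER's method. `resample_path` is a hypothetical helper, and the coordinates are arbitrary image-plane values.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def resample_path(path: List[Point], n: int) -> List[Point]:
    """Resample a 2D polyline to n points evenly spaced by arc length."""
    if n < 2 or len(path) < 2:
        raise ValueError("need at least 2 input points and n >= 2")
    seg = [math.dist(a, b) for a, b in zip(path, path[1:])]
    total = sum(seg)
    if total == 0:
        return [path[0]] * n  # degenerate path: all points coincide
    out: List[Point] = []
    i, walked = 0, 0.0  # current segment index, arc length before it
    for k in range(n):
        target = total * k / (n - 1)
        # Advance to the segment that contains the target arc length.
        while i < len(seg) - 1 and walked + seg[i] < target:
            walked += seg[i]
            i += 1
        t = (target - walked) / seg[i] if seg[i] > 0 else 0.0
        t = min(max(t, 0.0), 1.0)  # guard against rounding overshoot
        (x0, y0), (x1, y1) = path[i], path[i + 1]
        out.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
    return out


# An L-shaped path of length 2, resampled to 5 evenly spaced waypoints.
waypoints = resample_path([(0.0, 0.0), (1.0, 0.0), (1.0, 1.0)], 5)
```

A fixed-length waypoint list keeps the interface between the two levels simple: the high level can emit paths of any resolution, and the low level always receives the same shape of input.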

On real robots, HAMSTER improved average success rate by 20% (absolute) over OpenVLA across seven generalization axes, a 50% relative gain, demonstrating that off-domain data can overcome gaps in embodiment, dynamics, visual appearance, and task semantics.

5.6 Four Forms of Hierarchical Separation

Comparing the four approaches:

| Form | High-Level | Intermediate | Low-Level | Core Strength |
|---|---|---|---|---|
| Formal spec (AutoTAMP) | LLM → STL/PDDL | Formal logic | TAMP solver | Verifiability |
| Language motion (RT-H) | Task instruction | Language motion | Action output | Data efficiency, human correction |
| Atomic command (Hi Robot) | VLM → atomic cmd | Atomic commands | Low-level policy | Real-time human feedback |
| 2D path (HAMSTER) | VLM → 2D path | 2D path | 3D-aware policy | Off-domain data utilization |

GR00T N1's Dual-System Architecture (see Chapter 4) implements this hierarchical separation at the architecture level: System 2 (VLM) corresponds to the high-level of Hi Robot/HAMSTER, System 1 (Diffusion Transformer) to the low-level policy.
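What the four forms have in common is a two-stage interface parameterized by the intermediate representation. The sketch below expresses that as a generic type. It is an illustrative abstraction under stated assumptions, not an API from any of the cited systems: `HierarchicalPolicy` and both toy instantiations are hypothetical, with lambdas standing in for learned models.

```python
from dataclasses import dataclass
from typing import Callable, Generic, List, Tuple, TypeVar

I = TypeVar("I")  # type of the intermediate representation


@dataclass
class HierarchicalPolicy(Generic[I]):
    """High level maps an instruction to an intermediate; low level maps it to actions."""
    high_level: Callable[[str], I]
    low_level: Callable[[I], List[str]]

    def act(self, instruction: str) -> List[str]:
        intermediate = self.high_level(instruction)
        return self.low_level(intermediate)


# Language-motion form: the intermediate is a list of short motion phrases.
language_motion: HierarchicalPolicy[List[str]] = HierarchicalPolicy(
    high_level=lambda instr: ["move arm forward", "close gripper"],
    low_level=lambda motions: [f"action for '{m}'" for m in motions],
)

# 2D-path form: the intermediate is a list of image-plane points.
path_based: HierarchicalPolicy[List[Tuple[float, float]]] = HierarchicalPolicy(
    high_level=lambda instr: [(0.1, 0.2), (0.5, 0.5)],
    low_level=lambda pts: [f"track ({x:.1f}, {y:.1f})" for x, y in pts],
)

motion_actions = language_motion.act("pick up the cup")
path_actions = path_based.act("pick up the cup")
```

Swapping the intermediate type changes what can be verified, corrected, or trained from off-domain data, while the calling code stays the same.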

5.7 Comparison with Agentic Coding: The Naturalness of Abstraction

Understanding why hierarchical separation is so difficult in robotics but natural in Agentic Coding reveals a fundamental difference.

In Agentic Coding, abstraction layers are intrinsic to programming languages. Functions, classes, modules, packages: each level of abstraction is built into language design, and IDE autocomplete smooths transitions between layers. The path from high-level intent ("build an HTTP server") to low-level implementation (socket.bind()) is well-paved by frameworks and libraries.

In robotics, these abstractions must be manually designed. RT-H's language motion, HAMSTER's 2D paths, Hi Robot's atomic commands — each is a researcher-designed intermediate representation. CaP-X's discovery of "dependence on human-crafted abstraction" (see Chapter 3) points precisely to this problem.

AutoTAMP's formal verification approach is particularly interesting. The pattern "LLM generates code, linter/compiler/tests verify" from Agentic Coding is structurally identical to "LLM generates formal spec, TAMP solver verifies" from AutoTAMP. The difference: code verification has a mature toolchain (type checkers, linters, test frameworks), while robot TAMP verification is still nascent.

5.8 Open Problems and Outlook

The most fundamental open problem is determining the optimal number of layers and intermediate representation. Currently 2-3 layers predominate, but complex long-horizon tasks may require more. The optimal choice of intermediate representation (language? code? 2D path? formal logic?) may be task- and environment-dependent.

The second problem is inter-layer information loss. Information inevitably degrades as it passes through abstraction layers. The interface also carries a cost in time: in Hi Robot, VLM inference latency becomes a system bottleneck, which illustrates how interface design between layers determines overall system performance.

A promising convergence direction is code-based hierarchical planning. Combining Code-as-Symbolic-Planner's "code as solver/planner/checker" with HAMSTER/Hi Robot's "VLM high-level, policy low-level" structure could let code serve as a verifiable intermediate representation that simultaneously handles information transfer and verification between layers.

References

- Chen, Y. et al., "AutoTAMP: Autoregressive Task and Motion Planning with LLMs as Translators and Checkers," arXiv:2306.06531, 2023.

- Belkhale, S. et al., "RT-H: Action Hierarchies Using Language," arXiv:2403.01823, 2024.

- Shi, L. X. et al., "Hi Robot: Open-Ended Instruction Following with Hierarchical Vision-Language-Action Models," arXiv:2502.19417, 2025.

- Li, J. et al., "HAMSTER: Hierarchical Action Models for Open-World Robot Manipulation," arXiv:2502.05485, 2025.

- NVIDIA, "GR00T N1: An Open Foundation Model for Generalist Humanoid Robots," arXiv:2503.14734, 2025.

- Chen, Y. et al., "Code-as-Symbolic-Planner: Foundation Model-Based Robot Planning via Symbolic Code Generation," arXiv:2503.01700, 2025.

- Kim, M. J. et al., "OpenVLA: An Open-Source Vision-Language-Action Model," arXiv:2406.09246, 2024.

- Fu, M. et al., "CaP-X: A Framework for Benchmarking and Improving Coding Agents for Robot Manipulation," arXiv:2603.22435, 2026.