Chapter 6: Low-Level Control — Diffusion Policy and 3D Representations

Summary

If high-level planning decides "what to do," low-level control decides "how to do it." This chapter covers the physical frontier of robot control: Diffusion Policy established the standard for stochastic action generation, 3D Diffuser Actor integrated 3D spatial representations into policies, and DROID laid a foundation for generalization through large-scale in-the-wild data. This low-level domain has no counterpart in Agentic Coding; it is a challenge unique to the physical world.

6.1 Introduction: Between Deterministic Execution and Stochastic Policies

In Agentic Coding, execution is deterministic: print("hello") always outputs "hello". In robotics, executing "pick up the cup" is stochastic. The same command issued under nominally identical initial conditions can yield different outcomes because of small, unmeasured physical variations. Contact dynamics can behave chaotically, meaning they are highly sensitive to initial conditions, and environmental non-stationarity (temperature, humidity, wear) continuously shifts those conditions.

Designing control policies for this stochastic world is a fundamentally different problem from writing code. There may be multiple correct answers (multimodal action distributions), failures can be irreversible, and control loops must respond in real time, typically at 10 to 1000 Hz.

6.2 Diffusion Policy: The Standard for Stochastic Action Generation

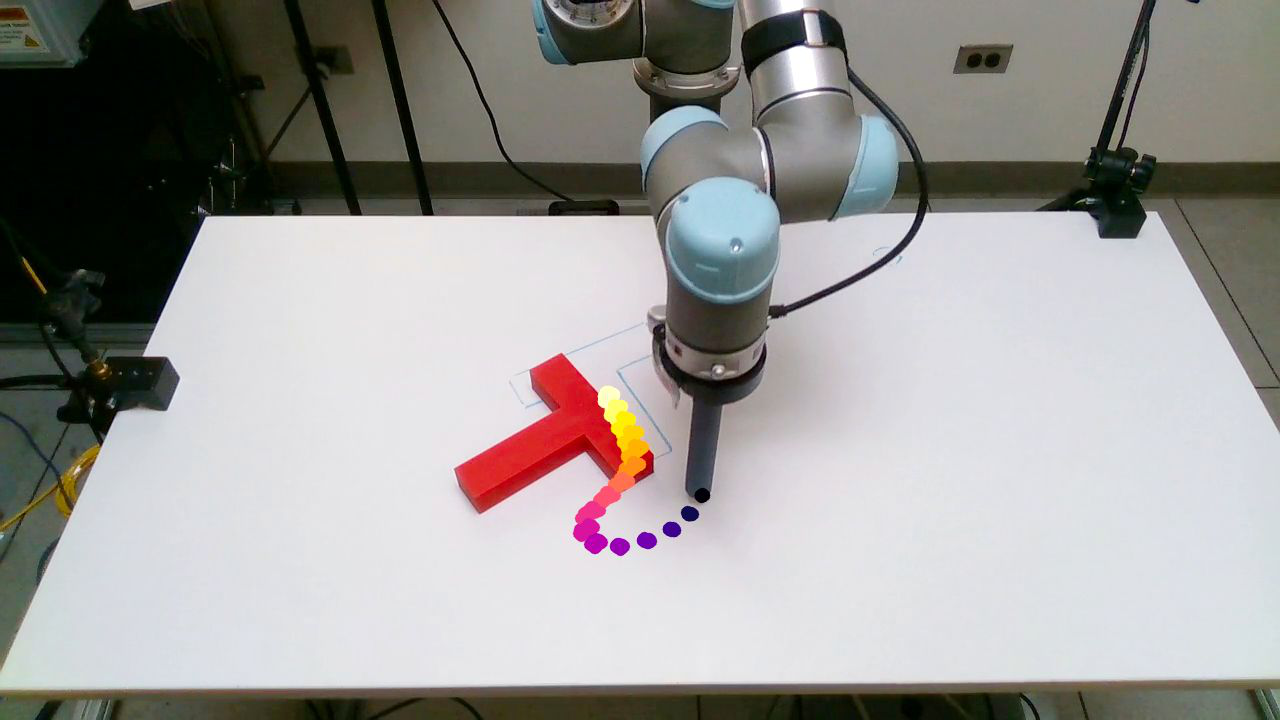

Diffusion Policy [Chi et al., 2023] generates robot actions through a conditional denoising diffusion process: starting from Gaussian noise, it progressively refines a sample into an action sequence conditioned on the current observations.
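The denoising loop can be sketched as follows. This is a minimal sketch of a DDPM-style reverse process, assuming a linear β schedule and the variance choice σ_t² = β_t; Diffusion Policy's actual schedule, step count, and network are not reproduced here. The names `make_schedule`, `sample_action_chunk`, and `oracle_noise_predictor` are hypothetical. In the real method the noise predictor is a trained network conditioned on observations; here a closed-form oracle for one known action chunk stands in for it, so the loop can be checked end to end.

```python
import numpy as np


def make_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule (an assumption; other schedules are common)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    return betas, alphas, alpha_bars


def sample_action_chunk(noise_predictor, obs, horizon, action_dim, schedule, seed=0):
    """Denoise Gaussian noise into a (horizon, action_dim) action chunk conditioned on obs."""
    betas, alphas, alpha_bars = schedule
    rng = np.random.default_rng(seed)
    x = rng.standard_normal((horizon, action_dim))  # x_T: pure noise
    for t in reversed(range(len(betas))):
        eps_hat = noise_predictor(x, t, obs)  # predicted noise component of x_t
        # Mean of x_{t-1} given x_t and the predicted noise
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps_hat) / np.sqrt(alphas[t])
        if t > 0:
            x = mean + np.sqrt(betas[t]) * rng.standard_normal(x.shape)  # sigma_t^2 = beta_t
        else:
            x = mean  # no noise is added on the final step
    return x


def oracle_noise_predictor(target_fn, alpha_bars):
    """Hypothetical stand-in for a trained network: exact noise if the clean chunk is target_fn(obs)."""
    def predict(x_t, t, obs):
        a0 = target_fn(obs)
        return (x_t - np.sqrt(alpha_bars[t]) * a0) / np.sqrt(1.0 - alpha_bars[t])
    return predict


# Usage: an 8-step chunk of 2-D actions; the hypothetical target simply repeats the observation
schedule = make_schedule()
betas, alphas, alpha_bars = schedule
obs = np.array([0.3, -0.2])
predictor = oracle_noise_predictor(lambda o: np.tile(o, (8, 1)), alpha_bars)
chunk = sample_action_chunk(predictor, obs, horizon=8, action_dim=2, schedule=schedule)
```

With a perfect noise predictor the loop recovers the clean chunk exactly; with a learned one, different seeds can land on different valid modes, which is the property the strengths below rely on.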

Its three key strengths are:

Multimodal action distribution handling. There are multiple valid ways to grasp a cup: from above, from the side, or by the handle. A deterministic policy trained with a regression loss averages across these modes, producing an action that belongs to none of them. Diffusion Policy instead samples from the learned distribution, committing to one valid mode and generating a coherent action.

High-dimensional action space support. Because the denoising network refines the entire action vector jointly, the approach remains effective as action dimensionality grows, which suits multi-joint robots and dexterous manipulation.

Action chunking. A single inference generates a sequence of actions spanning multiple timesteps, reducing how often the policy must be queried while keeping consecutive actions temporally coherent. Action chunking was subsequently adopted in the low-level action generation of VLAs such as Octo and GR00T N1.
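Receding-horizon execution of action chunks can be sketched as below, assuming a policy that returns `prediction_horizon` actions per call, of which only the first `exec_horizon` are executed before re-planning from a fresh observation. The function and callback names are hypothetical stand-ins for a real policy and robot interface; the sketch only illustrates how chunking reduces the number of policy queries.

```python
import numpy as np


def run_chunked_control(policy, get_obs, apply_action, total_steps, exec_horizon):
    """Execute total_steps actions, querying the policy once per chunk; return the number of policy calls."""
    calls = 0
    step = 0
    while step < total_steps:
        chunk = policy(get_obs())  # shape: (prediction_horizon, action_dim)
        calls += 1
        # Execute only the first exec_horizon actions, then re-plan from a fresh observation
        for action in chunk[:exec_horizon]:
            if step >= total_steps:
                break
            apply_action(action)
            step += 1
    return calls


# Hypothetical stand-ins: a policy that predicts 16 zero actions for a 7-DoF arm
executed = []
policy = lambda obs: np.zeros((16, 7))
get_obs = lambda: np.zeros(10)
apply_action = executed.append

calls_chunked = run_chunked_control(policy, get_obs, apply_action, total_steps=100, exec_horizon=8)
calls_single = run_chunked_control(policy, get_obs, apply_action, total_steps=100, exec_horizon=1)
# calls_chunked == 13, calls_single == 100
```

Executing 8 actions per call cuts policy queries from 100 to 13 for the same 100 control steps; the cost is that the robot reacts to new observations only at chunk boundaries.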

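The result, an average 46.9% improvement over prior state-of-the-art methods across 12 tasks, was decisive. Although not a VLA itself, Diffusion Policy shaped the low-level action generation of later VLAs: Octo uses a diffusion action head, pi0 uses the closely related flow matching, and GR00T N1 uses a diffusion transformer for its System 1.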
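The mode-averaging failure described under the first strength can be reproduced with a toy one-dimensional example. The demonstration data are synthetic and hypothetical, and resampling the demonstrations is only a crude stand-in for sampling from a trained diffusion model; the sketch shows why an MSE-trained deterministic policy lands between the modes.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical demonstrations for one observation: half approach the cup from the left
# (lateral offset near -1.0) and half from the right (near +1.0).
demos = np.concatenate([rng.normal(-1.0, 0.05, 500), rng.normal(1.0, 0.05, 500)])

# A deterministic policy trained with MSE converges to the conditional mean.
mse_action = demos.mean()

# A generative policy instead samples from the demonstrated distribution.
# Resampling the demos is a crude stand-in for a trained diffusion sampler.
sampled_actions = rng.choice(demos, size=10)

print(f"MSE-regressed action: {mse_action:+.3f}")
print("sampled actions:", np.round(sampled_actions, 2))
```

The regressed action sits near 0, a lateral offset that no demonstration used, while every sampled action lies near one of the two demonstrated approaches.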

6.3 3D Diffuser Actor: Integrating 3D Spatial Awareness

3D Diffuser Actor [Ke et al., 2024] addresses Diffusion Policy's reliance on 2D image observations by incorporating point clouds and 3D scene representations directly as policy inputs, giving the policy access to depth information and enabling 3D geometric reasoning.

The importance of 3D representations is especially pronounced in manipulation. For "pick up the cup," 2D images alone make it difficult to determine the distance to the cup, its 3D shape, and the spatial relationship between gripper and cup. Point clouds provide this information directly.

However, 3D representations carry costs: depth sensor noise, computational expense of point cloud processing, and field-of-view limitations from sensor placement.
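Point-cloud inputs are commonly obtained by back-projecting a calibrated depth image through the camera intrinsics. The following is a minimal sketch assuming an ideal pinhole camera (no lens distortion) and a sensor that encodes missing depth as 0; the function name and toy intrinsics are hypothetical, and this is not 3D Diffuser Actor's own preprocessing code. It also shows where one of the costs above appears in practice: invalid depth readings must be filtered out.

```python
import numpy as np


def depth_to_point_cloud(depth, fx, fy, cx, cy):
    """Back-project an (H, W) depth image (meters) into an (N, 3) camera-frame point cloud.

    Assumes an ideal pinhole camera with focal lengths fx, fy and principal point (cx, cy), in pixels.
    """
    h, w = depth.shape
    u, v = np.meshgrid(np.arange(w), np.arange(h))  # u: column index, v: row index, both (H, W)
    z = depth
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    points = np.stack([x, y, z], axis=-1).reshape(-1, 3)
    # Assumed sensor convention: missing returns are 0; drop them instead of placing points at the origin
    return points[points[:, 2] > 0]


depth = np.full((4, 4), 2.0)  # toy 4x4 depth image: a flat surface 2 m from the camera
depth[0, 0] = 0.0             # one missing reading
cloud = depth_to_point_cloud(depth, fx=2.0, fy=2.0, cx=1.5, cy=1.5)
print(cloud.shape)  # (15, 3)
```

Each remaining point carries metric x, y, z coordinates, so distances such as gripper-to-cup can be read off directly rather than inferred from pixels.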

6.4 DROID: Large-Scale In-the-Wild Data

DROID [Khazatsky et al., 2024] tackles low-level policy generalization through data scale. With 76,000 trajectories from 13 institutions across 564 scenes, it was the largest in-the-wild robot manipulation dataset at the time of its release.

DROID's core value is internalizing environmental diversity. Data collected from diverse environments worldwide naturally encompasses variations in lighting, backgrounds, object types, and table heights, improving policy generalization. DROID served as training data for Octo and OpenVLA, forming the data foundation of the open-source VLA ecosystem alongside Open X-Embodiment (see Chapter 4).

6.5 Design Axes of Low-Level Control

Current low-level control involves three key design decisions:

| Axis | Options | Tradeoff |
|---|---|---|
| Action generation | Diffusion / Flow matching / Auto-regressive | Multimodal distribution vs generation speed vs training efficiency |
| Observation representation | 2D image / Depth / Point cloud / Tactile | Information richness vs computation vs sensor availability |
| Temporal resolution | Single-step / Action chunking / Trajectory | Responsiveness vs coherence vs inference cost |

pi0's flow matching typically needs fewer integration steps than diffusion sampling, enabling faster generation; FAST's DCT-based tokenization compresses action chunks into fewer tokens, making auto-regressive generation viable for high-frequency, precise control; GR00T N1 implements System 1 with a diffusion transformer. The field has not yet converged on an optimal combination.
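The core idea behind FAST's tokenization can be sketched as follows: apply a discrete cosine transform (DCT) along the time axis of each action dimension, scale, and round to integers. Smooth trajectories concentrate their energy in a few low-frequency coefficients, so most rounded values become zero. This is a simplified sketch: the function names and the `scale` value are assumptions, the DCT is built by hand to avoid extra dependencies, and the FAST paper follows the rounding with a byte-pair-encoding compression step that is omitted here.

```python
import numpy as np


def dct_matrix(n):
    """Orthonormal DCT-II matrix: coeffs = M @ x and x = M.T @ coeffs."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    m = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    m[0, :] /= np.sqrt(2.0)  # rescale the DC row so the matrix is orthonormal
    return m


def tokenize_chunk(chunk, scale=10.0):
    """chunk: (horizon, action_dim). DCT over time per dimension, then scale and round to ints."""
    coeffs = dct_matrix(chunk.shape[0]) @ chunk
    return np.round(coeffs * scale).astype(int)


def detokenize_chunk(tokens, scale=10.0):
    """Invert the quantized DCT (lossy: rounding error is not recoverable)."""
    return dct_matrix(tokens.shape[0]).T @ (tokens / scale)


t = np.linspace(0.0, 1.0, 32)
chunk = np.stack([np.sin(2 * np.pi * t), 0.5 * t], axis=1)  # 32 timesteps, 2 action dims
tokens = tokenize_chunk(chunk)
recon = detokenize_chunk(tokens)
print("fraction of zero tokens:", np.mean(tokens == 0))
print("max reconstruction error:", np.abs(recon - chunk).max())
```

Because the transform is orthonormal, the L2 reconstruction error per dimension equals the L2 norm of the coefficient rounding error (at most 0.5 / scale per coefficient), so `scale` directly trades token count against precision.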

6.6 Comparison with Agentic Coding: A Domain Without Counterpart

Low-level control is the topic in this book most distant from Agentic Coding. Three points explain why this difference is fundamental.

Deterministic execution vs stochastic policies. Every action of a code agent (file reads, code edits, test runs) is deterministic: the same input produces the same result. Diffusion Policy, by contrast, explicitly learns a stochastic policy. That is an honest acknowledgment of physical-world uncertainty, but it would be unnecessary complexity in the digital world.

Discrete actions vs continuous control. Code agent actions are discrete, such as "open file," "call function," or "edit line," each with a clear start and end. Robot low-level control is continuous: joint torques or velocities must be output continuously at rates of 10 to 1000 Hz. It is unclear exactly when "grasp the cup" has been completed, so defining action boundaries is itself part of the problem.

The absence of contact dynamics. Code has no "contact": calling an API simply returns a result. In robot low-level control, physical contact with objects is central. Force distribution, friction, and deformation at the moment of contact are only partially observable through sensors, and minute differences separate success from failure. This is why robot manipulation is often described as "the last-centimeter problem."

Because of these three differences, Agentic Coding's success strategies (unlimited iteration, instant feedback, deterministic verification) cannot be directly applied to low-level control. Instead, what is needed are policies that are stochastic yet robust, integration of real-time sensory feedback, and simulation-based pre-verification.

6.7 Open Problems and Outlook

Three core open problems in low-level control:

Real-time inference speed. Diffusion and flow matching both generate actions through multiple network evaluations, and a gap remains between their generation speed and robot control rates of 100 Hz and above. Action chunking mitigates this, but long chunks reduce responsiveness. Edge-device inference optimization (quantization, pruning, distillation) is the practical path forward (see Chapter 10, Open Problem 7).

Tactile feedback integration. Most current policies rely on visual input. But for precision manipulation (grasping eggs, folding cloth, tightening screws), tactile feedback is essential. Research integrating tactile sensor data into diffusion policies remains in early stages.

Contact-rich manipulation. Contact-rich tasks such as opening doors, pushing drawers, and assembling objects, where contact dynamics must be accurately modeled and controlled, remain extremely challenging. Current VLAs and diffusion policies are most successful on relatively simple pick-and-place; extending them to contact-rich manipulation is the next frontier.
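The real-time tradeoff in the first open problem can be made concrete with back-of-the-envelope arithmetic. This sketch assumes the next chunk is computed in parallel with execution of the current one, from an observation captured just early enough that the new chunk is ready at the chunk boundary; the 80 ms latency is a hypothetical figure, not a measurement of any particular model, and the function names are illustrative.

```python
def min_exec_horizon(inference_latency_ms: int, control_hz: int) -> int:
    """Smallest number of executed actions per chunk that covers one inference call (integer ceiling)."""
    return -(-inference_latency_ms * control_hz // 1000)


def worst_case_reaction_ms(exec_horizon: int, control_hz: int, inference_latency_ms: int) -> float:
    """Under the stated assumption, an event just after an observation is captured waits one full
    chunk for the next capture, then one inference latency before the new chunk starts executing."""
    return exec_horizon * 1000 / control_hz + inference_latency_ms


latency_ms = 80  # hypothetical inference latency for one chunk
k = min_exec_horizon(latency_ms, control_hz=100)
print(k, worst_case_reaction_ms(k, 100, latency_ms))  # 8 160.0
print(worst_case_reaction_ms(16, 100, latency_ms))    # 240.0
```

Doubling the executed chunk from 8 to 16 actions halves the number of policy calls but, under these assumptions, raises the worst-case reaction delay from 160 ms to 240 ms.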

The most promising direction is combination with hierarchical approaches (see Chapter 5). A division in which high-level VLMs decide "what to grasp and where" while low-level diffusion policies handle the precision control of "how to grasp" currently appears to be the most effective. HAMSTER [Li et al., 2025] and GR00T N1 [NVIDIA, 2025] exemplify this direction.

References

- Chi, C. et al., "Diffusion Policy: Visuomotor Policy Learning via Action Diffusion," arXiv:2303.04137, 2023.

- Ke, T. et al., "3D Diffuser Actor: Policy Diffusion with 3D Scene Representations," arXiv:2402.10885, 2024.

- Khazatsky, A. et al., "DROID: A Large-Scale In-The-Wild Robot Manipulation Dataset," arXiv:2403.12945, 2024.

- Black, K. et al., "π0: A Vision-Language-Action Flow Model for General Robot Control," arXiv:2410.24164, 2024.

- Pertsch, K. et al., "FAST: Efficient Action Tokenization for Vision-Language-Action Models," arXiv:2501.09747, 2025.

- NVIDIA, "GR00T N1: An Open Foundation Model for Generalist Humanoid Robots," arXiv:2503.14734, 2025.

- Li, J. et al., "HAMSTER: Hierarchical Action Models for Open-World Robot Manipulation," arXiv:2502.05485, 2025.

- Ghosh, D. et al., "Octo: An Open-Source Generalist Robot Policy," arXiv:2405.12213, 2024.

- Kim, M. J. et al., "OpenVLA: An Open-Source Vision-Language-Action Model," arXiv:2406.09246, 2024.

- Open X-Embodiment Collaboration, "Open X-Embodiment: Robotic Learning Datasets and RT-X Models," arXiv:2310.08864, 2023.