Chapter 2: LLM as Planner — Zero-Shot Planning and Grounding

Summary

In 2022, pretrained LLMs were shown to work as high-level planners for robots. This line of work progressed through three stages (generation, grounding, and structuring), each targeting the main limitation of its predecessor. Text-only planning nevertheless proved inadequate for controlling the physical world, which motivated two successor paradigms: Code as Policy and VLA.

2.1 Introduction: The Discovery of World Knowledge in LLMs

In early 2022, researchers made a striking discovery. LLMs trained on internet text could decompose instructions like "make breakfast" into action sequences such as "open fridge, take out milk, close fridge, ..." This capability was not explicitly trained but emerged from "world knowledge" implicitly encoded in internet text.

This discovery transformed robot planning. Previously, experts had to manually design formal planning systems like PDDL or behavior trees. Each new task required new planning logic, creating a fundamental bottleneck for general-purpose robotics. LLMs showed the potential to bypass this bottleneck entirely.

Yet the question "can LLMs plan?" quickly evolved into the harder question: "can those plans actually be executed in the physical world?" The trajectory of that evolution is the central thread of this chapter.

2.2 Language Models as Zero-Shot Planners: The Starting Point

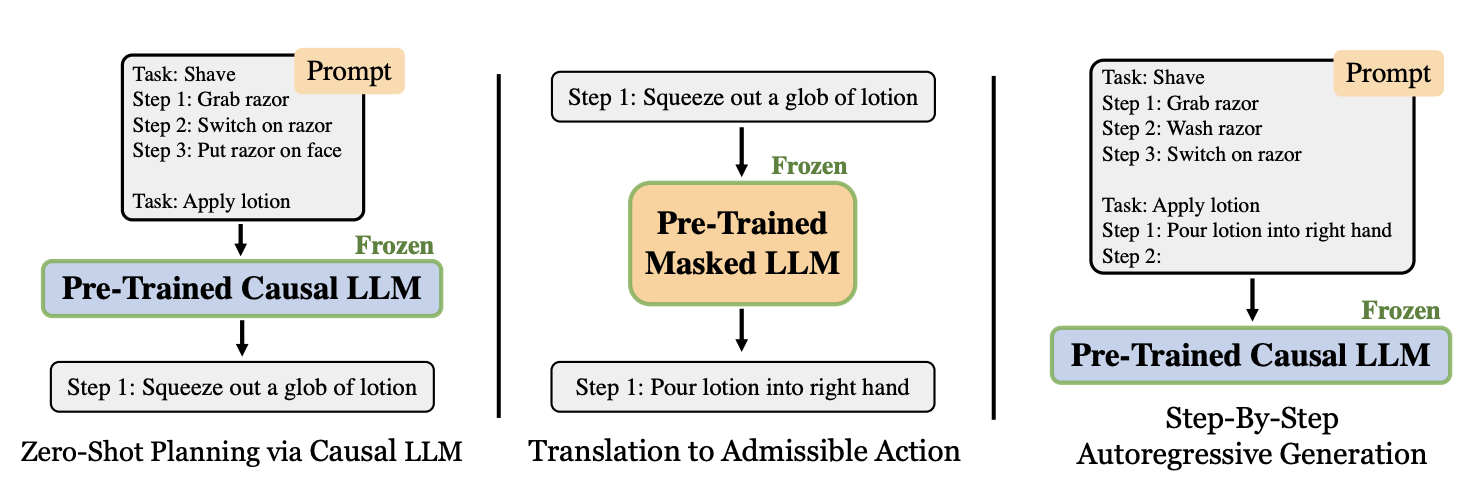

[Huang et al., 2022] is the origin point for LLM-based robot planning.

Three key techniques were introduced. Admissible Action Parsing semantically maps the LLM's free-form output to the closest action in the environment's set of permissible actions. Autoregressive Trajectory Correction appends each translated action to the prompt, so that every subsequent step is generated conditioned on admissible actions rather than on the LLM's raw output. Dynamic Example Selection picks the example most similar to the query task for the few-shot prompt.
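The first two techniques can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the bag-of-words `embed` function is a stand-in for a learned sentence encoder, and `ADMISSIBLE`, `scripted_llm`, and the `min_score` stopping threshold are hypothetical names and values chosen for the example.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words counts (stand-in for a sentence encoder)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def to_admissible(free_form: str, admissible: list[str]) -> tuple[str, float]:
    """Return the admissible action most similar to the LLM's free-form step."""
    query = embed(free_form)
    score, best = max((cosine(query, embed(a)), a) for a in admissible)
    return best, score


def plan(task, generate_step, admissible, max_steps=5, min_score=0.3):
    """Interleave generation and translation, one step at a time."""
    prompt = f"Task: {task}\n"
    steps = []
    for i in range(max_steps):
        raw = generate_step(prompt)
        action, score = to_admissible(raw, admissible)
        if score < min_score:  # nothing admissible is close enough: stop
            break
        steps.append(action)
        # Append the translated action, not the raw text, to the prompt.
        prompt += f"Step {i + 1}: {action}\n"
    return steps


def scripted_llm(outputs):
    """Hypothetical stand-in for an LLM: replays canned free-form steps."""
    it = iter(outputs)
    return lambda prompt: next(it, "")


ADMISSIBLE = ["open fridge", "grab milk", "close fridge", "walk to kitchen"]

best, score = to_admissible("open the fridge door", ADMISSIBLE)
print(best, round(score, 2))  # open fridge 0.71

llm = scripted_llm(
    ["open the fridge door", "grab the milk carton", "close the fridge", "do a backflip"]
)
steps = plan("get milk", llm, ADMISSIBLE)
print(steps)  # ['open fridge', 'grab milk', 'close fridge']
```

Because the prompt accumulates translated actions, a later step never builds on wording the environment cannot execute. The last canned step matches no admissible action, so the loop stops instead of forcing a poor match.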

The results were both encouraging and revealing. Executability improved dramatically from 18% to 79%, but semantic correctness remained at just 32.87% (LCS metric). Plans that were executable but incorrect previewed the fundamental dilemma of LLM planners.
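To make the gap between executability and correctness concrete, the sketch below scores a plan against a reference using the longest common subsequence (LCS). The normalization by the longer of the two plans is an assumption made for this example, and the plans themselves are invented.

```python
def lcs_length(a: list[str], b: list[str]) -> int:
    """Length of the longest common subsequence of two step lists."""
    dp = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i, x in enumerate(a, 1):
        for j, y in enumerate(b, 1):
            if x == y:
                dp[i][j] = dp[i - 1][j - 1] + 1
            else:
                dp[i][j] = max(dp[i - 1][j], dp[i][j - 1])
    return dp[-1][-1]


def normalized_lcs(plan: list[str], reference: list[str]) -> float:
    """LCS divided by the longer plan's length (assumed normalization)."""
    longest = max(len(plan), len(reference))
    return lcs_length(plan, reference) / longest if longest else 1.0


reference = ["walk to kitchen", "open fridge", "grab milk", "close fridge"]
generated = ["walk to kitchen", "open fridge", "close fridge"]

similarity = normalized_lcs(generated, reference)
print(similarity)  # 0.75
```

Every step of `generated` is individually executable, yet the plan never grabs the milk. The overlap score is below 1 even though nothing in the plan would be rejected by the environment.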

The most critical limitation was the absence of grounding. The LLM could not observe the current state of the physical environment, so it might plan "take milk from the fridge" when no milk exists. A code agent can read the file system instantly; a robot planner has no equivalent. This observation-action gap was the first clear evidence of a fundamental challenge in physical planning.

2.3 SayCan: Grounding in the Physical World

SayCan [Ahn et al., 2022] directly attacked the grounding problem by combining the LLM's semantic knowledge ("Say") with a robot affordance function ("Can").

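The core idea is a product of probabilities. For an instruction $i$, a state $s$, and a skill $\pi$ with text description $\ell_\pi$, SayCan selects

$$\pi^{*} = \arg\max_{\pi \in \Pi} \; p(c_\pi \mid s, \ell_\pi)\; p(\ell_\pi \mid i)$$

Here $p(\ell_\pi \mid i)$ is the LLM's score for how useful the skill is for the task, and $p(c_\pi \mid s, \ell_\pi)$ is the affordance function's score for how feasible the skill is in the current environment. The skill with the highest combined score is selected from a library of 551 pretrained robot skills.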
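The selection rule can be illustrated with a short sketch. The skill names and probabilities below are invented for the example; in the real system the "say" score would come from an LLM and the "can" score from a learned affordance model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SkillScore:
    skill: str
    p_say: float  # LLM score: how useful is the skill for the instruction?
    p_can: float  # affordance score: how feasible is it in the current state?

    @property
    def combined(self) -> float:
        return self.p_say * self.p_can


def select_skill(scores: list[SkillScore]) -> SkillScore:
    """Pick the skill with the highest product of the two scores."""
    return max(scores, key=lambda s: s.combined)


# Hypothetical scores for "I spilled my drink, can you help?"
candidates = [
    SkillScore("pick up sponge", p_say=0.50, p_can=0.90),
    SkillScore("pick up vacuum", p_say=0.60, p_can=0.05),  # no vacuum in view
    SkillScore("go to table", p_say=0.30, p_can=0.95),
]

chosen = select_skill(candidates)
llm_only = max(candidates, key=lambda s: s.p_say)
print(chosen.skill)    # pick up sponge
print(llm_only.skill)  # pick up vacuum
```

The LLM alone prefers the vacuum, which is not feasible in this scene. Multiplying by the affordance score shifts the choice to a skill the robot can actually execute.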

Evaluated on 101 tasks in a real kitchen environment, SayCan with PaLM 540B achieved 84% planning success and 74% execution success, reducing the error rate by 50% compared to FLAN. It successfully executed tasks requiring up to 8 steps, including contextual requests like "I spilled coke on the table, how can you help me clean it up?"

The "Say + Can" paradigm became the standard framework for subsequent research. It is the direct ancestor of Google's robot foundation model lineage — RT-1, RT-2, PaLM-E — and was scaled to fleet management with AutoRT (2024) (see Chapter 8).

However, SayCan's limitations were also clear: a closed set of 551 skills that requires additional training to extend, static affordances that could not reflect real-time environmental changes, and limited replanning upon failure. The contrast with Agentic Coding is stark: there, adding a new tool requires only a tool description, not retraining.

2.4 SayPlan: Structuring the Environment Representation

SayPlan [Rana et al., 2023] directly targeted SayCan's scale limitations. Its key insight: the environment representation determines the scale of LLM planning.

SayPlan exploits the hierarchical structure of 3D Scene Graphs (3DSG) — building, floor, room, object. Rather than feeding the entire graph to the LLM, it performs Hierarchical Semantic Search, selectively expanding only the task-relevant subgraph. Integration with a classical path planner reduces the LLM's planning horizon, and an Iterative Replanning Pipeline corrects infeasible actions.
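The expand-only-what-is-relevant idea can be sketched as follows. This is a simplified illustration under stated assumptions: the scene graph is a nested dictionary, and `rank_rooms` with its `ROOM_HINTS` table is a hypothetical stand-in for the LLM deciding which room nodes to expand.

```python
SCENE_GRAPH = {
    "floor_1": {
        "kitchen": ["fridge", "milk", "mug"],
        "lobby": ["sofa", "plant"],
    },
    "floor_2": {
        "office": ["desk", "stapler"],
        "meeting_room": ["whiteboard", "marker"],
    },
}

# Hypothetical prior standing in for the LLM's commonsense guess.
ROOM_HINTS = {"milk": "kitchen", "mug": "kitchen", "marker": "meeting_room"}


def collapsed_view(graph):
    """Floors and room names only; objects stay hidden until expansion."""
    return {floor: sorted(rooms) for floor, rooms in graph.items()}


def rank_rooms(rooms, targets):
    """Put rooms that likely contain a target first (stable sort)."""
    likely = {ROOM_HINTS.get(t) for t in targets}
    return sorted(rooms, key=lambda floor_room: floor_room[1] not in likely)


def semantic_search(graph, targets):
    """Expand rooms in ranked order until every target object is located."""
    view = collapsed_view(graph)
    rooms = [(floor, room) for floor, names in view.items() for room in names]
    remaining = set(targets)
    subgraph, expanded = {}, 0
    for floor, room in rank_rooms(rooms, targets):
        if not remaining:
            break
        objects = graph[floor][room]  # "expand": reveal the room's objects
        expanded += 1
        found = remaining & set(objects)
        if found:
            subgraph[(floor, room)] = sorted(found)
            remaining -= found
        # Rooms without a target are "contracted": nothing is kept.
    return subgraph, expanded


subgraph, expanded = semantic_search(SCENE_GRAPH, ["milk", "marker"])
print(subgraph)
print(expanded)  # 2
```

Only two of the four rooms are expanded here, so the planner's context contains two object nodes instead of the whole building. The saving grows with the size of the graph.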

The results were impressive: successful long-horizon task planning in a 3-floor building with 36 rooms and 140+ objects. Iterative replanning corrected most hallucination errors, though 6.67% of tasks retained uncorrectable hallucinated nodes.
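A replanning loop of this kind can be sketched as below. It is an illustration only: `verify` checks plans against a plain set of known objects rather than a scene graph simulator, and `scripted_planner` is a hypothetical stand-in for an LLM that revises its plan when given feedback.

```python
def verify(plan, known_objects):
    """Return error messages for steps that reference unknown objects."""
    return [
        f"unknown object '{obj}' in step '{verb} {obj}'"
        for verb, obj in plan
        if obj not in known_objects
    ]


def replan_loop(propose, known_objects, max_iters=3):
    """Ask for a plan, verify it, and feed errors back until it passes."""
    feedback = []
    for attempt in range(1, max_iters + 1):
        plan = propose(feedback)
        feedback = verify(plan, known_objects)
        if not feedback:
            return plan, attempt
    return None, max_iters  # still infeasible after the iteration budget


def scripted_planner(drafts):
    """Hypothetical planner: replays canned drafts, ignoring the feedback."""
    it = iter(drafts)
    return lambda feedback: next(it)


known = {"fridge", "milk", "mug"}
planner = scripted_planner(
    [
        [("open", "fridge"), ("grab", "juice")],  # "juice" is hallucinated
        [("open", "fridge"), ("grab", "milk")],
    ]
)

final_plan, attempts = replan_loop(planner, known)
print(final_plan)
print(attempts)  # 2
```

The loop returns `None` when the iteration budget runs out, which corresponds to the residual cases where a hallucinated node is never corrected.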

SayPlan's most important legacy is establishing the scene graph + LLM planning combination. This combination feeds directly into KARMA's long-term memory system [Wang et al., 2024], Embodied-RAG's spatial-semantic retrieval [Xie et al., 2024], and VeriGraph's execution verification [Ekpo et al., 2024] (see Chapter 7).

2.5 The Evolutionary Arc: From Generation to Structure

The evolutionary trajectory across these three papers is clear:

| Stage | Key Achievement | Remaining Limitation |
|---|---|---|
| Generation (LLM as Planners) | LLMs can generate plans | Not grounded in physical world |
| Grounding (SayCan) | Affordances provide grounding | Limited space, static environment |
| Structuring (SayPlan) | Scene graphs enable scaling | No dynamic environment tracking, residual hallucination |

Each stage precisely resolved its predecessor's core limitation while exposing new ones. The challenges that all three stages left unresolved triggered subsequent research directions:

- "What if plans were expressed as code?" — Code as Policy (see Chapter 3)

- "What if the LLM directly output actions?" — VLA (see Chapter 4)

- "What if high-level planning and low-level control were separated?" — Hierarchical Planning (see Chapter 5)

- "What if we could remember dynamic environments?" — Memory & Scene Graph (see Chapter 7)

- "What if we could detect and recover from failures?" — Agentic Systems (see Chapter 8)

2.6 Comparison with Agentic Coding: The Determinism of Tool Calls

The core problem these three papers wrestle with is grounding — connecting LLM plans to the physical world. Examining why this problem does not arise in Agentic Coding reveals the fundamental difference.

When an LLM in Agentic Coding plans "read the file," the command `cat file.txt` executes instantly, deterministically, and completely. Tool availability is verifiable through API documentation or type signatures, and results are immediately returned as text. Tool calls are deterministic, and that is the key.

In SayCan, whether a robot skill can be executed depends on the physical environment state and requires a separately trained affordance model. The same "pick up cup" skill has varying success probabilities depending on the cup's position, orientation, and surface properties. Tool calls are stochastic. SayPlan's use of 3D scene graphs is structurally similar to drilling down from a directory tree to a specific file to a specific function in a codebase, but constructing and maintaining a physical scene graph is expensive and requires constant updates.

This difference is not merely technical. Because digital tool calls are deterministic and cheap, an agent can run "try, fail, retry" loops almost without limit. Because physical execution is stochastic, each attempt carries cost and risk, so the robot must estimate the probability of success before trying. SayCan's affordance scoring was the first attempt at precisely this estimation.
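A simple expected-value calculation illustrates why the cost of failure changes the strategy. The reward and cost numbers below are invented for the example, and the decision rule is a generic one, not something taken from the SayCan paper.

```python
def expected_value(p_success: float, reward: float, failure_cost: float) -> float:
    """Expected payoff of a single attempt."""
    return p_success * reward - (1.0 - p_success) * failure_cost


def min_success_probability(reward: float, failure_cost: float) -> float:
    """Break-even probability: attempt only if p_success exceeds this."""
    return failure_cost / (reward + failure_cost)


def should_attempt(p_success: float, reward: float, failure_cost: float) -> bool:
    return expected_value(p_success, reward, failure_cost) > 0


# Digital tool call: failing costs almost nothing, so a long shot is fine.
print(should_attempt(0.1, reward=1.0, failure_cost=0.01))  # True

# Physical action: a failed grasp may break the cup, so the bar is higher.
print(should_attempt(0.1, reward=1.0, failure_cost=5.0))   # False
print(should_attempt(0.9, reward=1.0, failure_cost=5.0))   # True
```

With a failure cost five times the reward, the break-even success probability is 5/6, so an estimate of success is needed before acting. With a near-zero failure cost, trying blindly is the rational choice.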

2.7 Open Problems and Outlook

The LLM Planner paradigm leaves three fundamental open problems.

First, the absence of closed-loop replanning. None of the three papers offers robust recovery from execution failures. SayPlan's iterative replanning is the most advanced attempt, but it does not address physical execution failures. This challenge is taken up by REFLECT [Liu et al., 2023] and BUMBLE [Shah et al., 2024] (see Chapter 8).

Second, dynamic environment tracking. All three papers assume static or quasi-static environments. There is no mechanism to detect and incorporate changes made by other people or robots. KARMA's short-term memory system [Wang et al., 2024] represents the first step in this direction (see Chapter 7).

Third, complete hallucination elimination. SayPlan's residual 6.67% hallucination rate reveals an inherent limitation of LLM-based planning. AutoTAMP's formal verification [Chen et al., 2023] and VeriGraph's scene-graph-based verification [Ekpo et al., 2024] address this but do not achieve complete solutions (see Chapters 5 and 7).

The conclusion of a recent survey [2025] is clear: LLMs are inadequate as standalone planners but powerful when combined with traditional planning methods. The hybrid approach — combining LLM flexibility with the rigor of classical planning — is the most promising direction, explored further in the hierarchical planning of Chapter 5.

References

- Huang, W. et al., "Language Models as Zero-Shot Planners: Extracting Actionable Knowledge for Embodied Agents," arXiv:2201.07207, 2022.

- Ahn, M. et al., "Do As I Can, Not As I Say: Grounding Language in Robotic Affordances," arXiv:2204.01691, 2022.

- Rana, K. et al., "SayPlan: Grounding Large Language Models using 3D Scene Graphs for Scalable Robot Task Planning," arXiv:2307.06135, 2023.

- Liu, Z. et al., "REFLECT: Summarizing Robot Experiences for Failure Explanation and Correction," arXiv:2306.15724, 2023.

- Wang, Z. et al., "KARMA: Augmenting Embodied AI Agents with Long-and-short Term Memory Systems," arXiv:2409.14908, 2024.

- Xie, Q. et al., "Embodied-RAG: General Non-parametric Embodied Memory for Retrieval and Generation," arXiv:2409.18313, 2024.

- Ekpo, D. et al., "VeriGraph: Scene Graphs for Execution Verifiable Robot Planning," arXiv:2411.10446, 2024.

- Chen, Y. et al., "AutoTAMP: Autoregressive Task and Motion Planning with LLMs as Translators and Checkers," arXiv:2306.06531, 2023.

- Shah, R. et al., "BUMBLE: Unifying Reasoning and Acting with Vision-Language Models for Building-wide Mobile Manipulation," arXiv:2410.06237, 2024.

- Ahn, M. et al., "AutoRT: Embodied Foundation Models for Large Scale Orchestration of Robotic Agents," arXiv:2401.12963, 2024.

- Survey, "A Survey on Large Language Models for Automated Planning," arXiv:2502.12435, 2025.